Hashidays 2017 – London: a personal report

I have recently started a tradition of copying and pasting the reports of the events I attend so that the community can read them. As always, they are a mix of (personal) thoughts that, as such, are always questionable, as well as raw notes that I took during the keynotes and breakout sessions.

You can find/read previous reports at these links:

Note that some of these reports contain private comments meant as internal considerations to be shared with my team. These comments are removed before the blog is posted publicly and replaced with a “Redacted comments” placeholder.

Have a good read. Hopefully, you will find this small "give back" to the community helpful.

_________________________

Massimo Re Ferré, CNA BU - VMware

Hashidays London report

London June 12th 2017

Executive summary and general comments

Overall, this was a good full-day event. My gut feeling is that the audience was fairly technical, which mapped well to the spirit of the event (and of HashiCorp in general).

There were nuggets of marketing messaging, primarily from Mitchell H (e.g. “provision, secure, connect and run any infrastructure for any application”), but these messages seemed a little artificial and bolted on. HashiCorp remains (to me) a very engineering-focused organization whose products market themselves within an (apparently) growing and loyal community of users.

There were very few mentions of Docker and Kubernetes compared to other similar conferences. While this may be due to my personal bias (I tend to attend more container-focused conferences as of late), I found it interesting that more time was spent talking about HashiCorp’s view on Serverless than about containers and Docker.

HashiCorp’s approach to intercepting the container trend is interesting. Nomad seems to be the product they are pushing as an answer to the likes of Docker Swarm / Docker EE and Kubernetes. Yet Nomad is a general-purpose scheduler which (almost incidentally) supports Docker containers, and a lot of the advanced networking and storage workflows available in Kubernetes and in the Docker Swarm/EE stack apparently aren’t available in Nomad.

One of the biggest tenets of HashiCorp’s strategy is, obviously, multi-cloud. They tend to compete with specific technologies offered by specific cloud providers (which only work in that cloud), so the notion of cloud-agnostic technologies that work seamlessly across different public clouds is something they leverage (a ton).

Terraform seemed to be the standout product in terms of highlights and number of sessions. Packer and Vagrant were hardly mentioned outside the keynote, with Vault, Nomad and Consul sharing the remaining time almost equally.

In terms of the backend services and infrastructure they tend to work with (or that their customers tend to end up on), I would say the event was 100% centered on public cloud. (Redacted comments).

All examples, talks, demos, customer scenarios, etc. were focused on public cloud consumption. If I had to guess a share of “sentiment”, I’d say AWS gets a good 70%, GCP another 20% and Azure 10%. These are not hard data, just gut feelings.

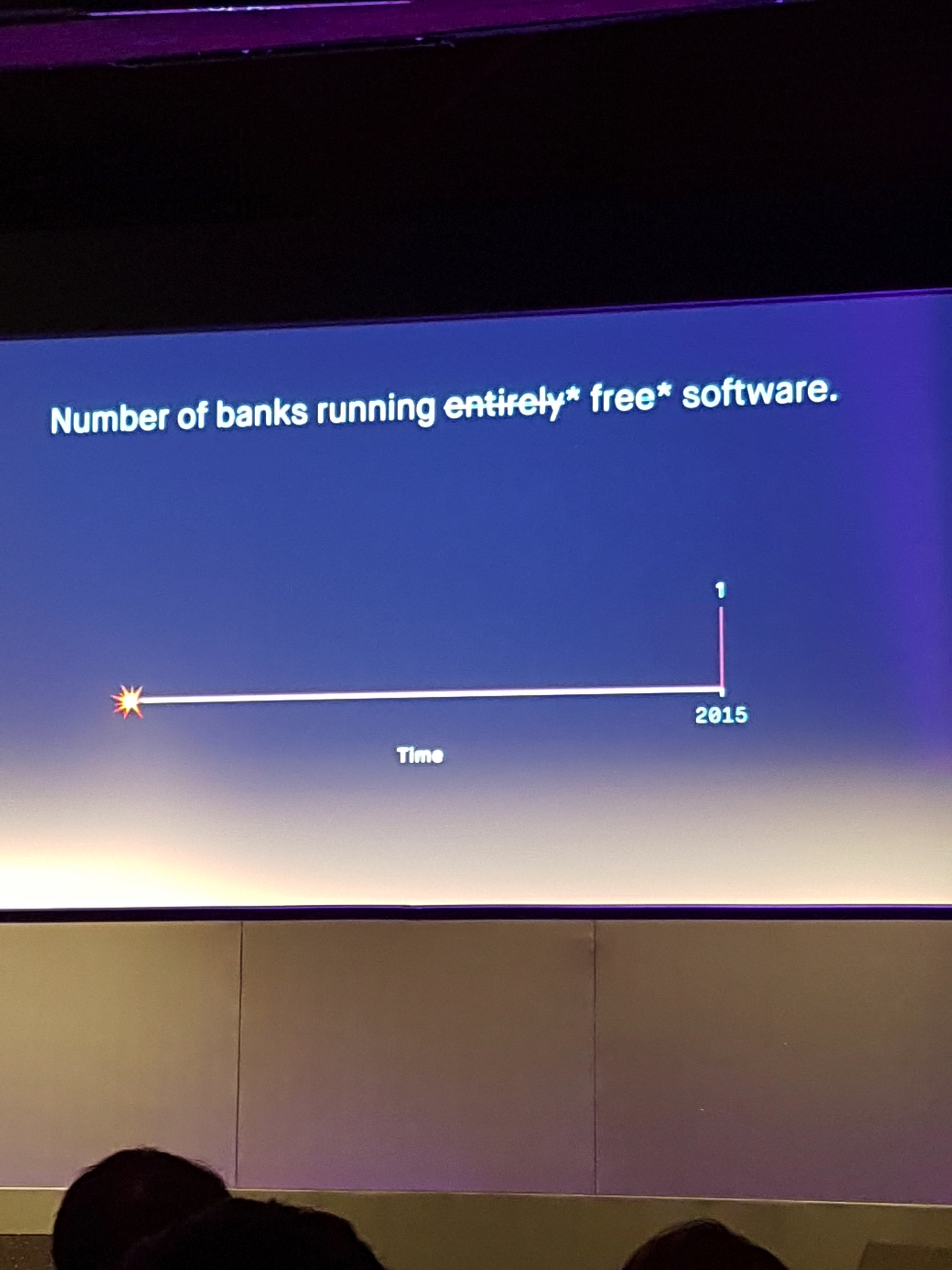

The monetization strategy for HashiCorp remains (IMO) an interesting challenge. A lot (all?) of the customer talks were based on scenarios where they were using standard open source components. Some customers specifically prided themselves on having built everything with free open source software. One customer mentioned that at some point they would buy Enterprise licenses, but the way it was phrased made me think this would be a give-back to HashiCorp (to which they owe a lot) rather than a response to specific technical needs for the Enterprise version of the software.

That said, there is no doubt HashiCorp is doing amazing things technologically and their work is very well respected.

The next few sections contain some raw notes I took during the various sessions throughout the day.

Opening Keynote (Mitchell Hashimoto)

The London HashiCorp User Group is the largest in the world (1300 people).

HashiCorp’s strategy is to… provision (Vagrant, Packer, Terraform), secure (Vault), connect (Consul) and run (Nomad) any infrastructure for any application.

Over the last few years, lots of Enterprise features have found their way into many of the above products.

The theme for Consul has been “easing the management of Consul at scale”. The family of Autopilot features (a set of features that allows Consul to manage itself) is an example of that. Some of these features are only available in the Enterprise version of Consul.

The theme for Vault has been to broaden the feature set. Replication across data centers is one such feature (achieved via log shipping).
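To illustrate what “replication via log shipping” means in general terms, here is a minimal, in-memory Python sketch: the primary appends every write to an ordered log, and a secondary remembers the last index it applied and replays only newer entries. The `Primary`/`Secondary` classes and their methods are hypothetical, and this is not how Vault implements replication internally; networking, persistence, conflict handling and log truncation are all omitted.

```python
from dataclasses import dataclass, field


@dataclass
class Primary:
    """Hypothetical primary: applies writes locally and appends them to a log."""
    data: dict = field(default_factory=dict)
    log: list = field(default_factory=list)  # entries are (index, key, value)

    def write(self, key, value):
        self.data[key] = value
        # Log indices start at 1, so entry i is stored at list position i - 1.
        self.log.append((len(self.log) + 1, key, value))

    def entries_after(self, index):
        """Return every log entry whose index is greater than `index`."""
        return self.log[index:]


@dataclass
class Secondary:
    """Hypothetical secondary: replays shipped entries it has not applied yet."""
    data: dict = field(default_factory=dict)
    applied_index: int = 0

    def sync(self, primary):
        for index, key, value in primary.entries_after(self.applied_index):
            self.data[key] = value
            self.applied_index = index


primary, secondary = Primary(), Secondary()
primary.write("secret/db", "v1")
primary.write("secret/api", "k1")
secondary.sync(primary)
primary.write("secret/db", "v2")
secondary.sync(primary)  # only the third log entry is shipped this time
```

Because the secondary only tracks a single index, a sync after an interruption simply resumes from where it left off.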

Nomad is being adopted by the largest companies first (a very different pattern compared to the other HashiCorp tools). The recent focus has been on solving some interesting problems that surface in these large organizations. One such advancement is Dispatch (HashiCorp’s interpretation of Serverless). You can now also run Spark jobs on Nomad.

The theme for Terraform has been to improve platform support. To achieve this, HashiCorp is splitting the Terraform core product from the providers (managing the community of contributors is going to be easier with this model). Terraform will download providers dynamically, but they will be developed and distributed separately from the Terraform product code. In the next version, you will also be able to version the providers and require a specific provider version in a given Terraform configuration. “terraform init” will download the providers.
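As a rough illustration of what pinning a provider version involves, the Python sketch below evaluates Terraform’s `~>` (“pessimistic”) constraint operator, under which `~> 1.2` accepts 1.2 and later 1.x releases but not 2.0, while `~> 1.2.0` accepts 1.2.x releases only. The function names are hypothetical, only plain numeric versions are handled (no pre-release suffixes), and this is not Terraform’s actual code.

```python
def parse_version(text):
    """Turn '1.2.3' into (1, 2, 3); pre-release suffixes are not supported."""
    return tuple(int(part) for part in text.strip().split("."))


def satisfies_pessimistic(version, constraint):
    """Return True if `version` satisfies a '~> X.Y' or '~> X.Y.Z' constraint."""
    base = parse_version(constraint.strip().removeprefix("~>"))
    candidate = parse_version(version)

    # Lower bound: pad both tuples with zeros so (1, 2) compares like (1, 2, 0).
    width = max(len(base), len(candidate))
    padded_base = base + (0,) * (width - len(base))
    padded_candidate = candidate + (0,) * (width - len(candidate))
    if padded_candidate < padded_base:
        return False

    # Upper bound: every component except the last one in the constraint is
    # fixed, so only the right-most specified component is allowed to grow.
    fixed = base[:-1]
    return candidate[:len(fixed)] == fixed
```

For example, `satisfies_pessimistic("1.9.3", "~> 1.2")` is true, while `satisfies_pessimistic("1.3.0", "~> 1.2.0")` is false. Requires Python 3.9+ for `str.removeprefix`.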

Mitchell brings up the example of the DigitalOcean firewall feature. They didn’t know it was coming, but 6 hours after the DO announcement they received a PR from DO that implemented all the firewall features in the DO provider (these situations are much easier to manage when community members contribute to providers that are not part of the core Terraform code base).

Modern Secret Management with Vault (Jeff Mitchell)

Vault is not just an encrypted key/value store. For example, generating and managing certificates is something that Vault is proving to be very good at.

One of the key Vault features is that it provides multiple (security-related) services fronted by a single API and a consistent authn/authz/audit model.

Jeff talks about the concept of “Secure Introduction” (i.e. how a client/consumer gets its initial security key in the first place). There is no one-size-fits-all answer: it depends on your situation, the infrastructure you use, what you trust and don’t trust, etc. It also varies depending on whether you are using bare metal, VMs, containers, public cloud, etc., as each of these models has its own facilities to enable “secure introduction”.

Jeff then talks about a few scenarios where you could leverage Vault to secure client-to-app, app-to-app and app-to-DB communication, and how to encrypt databases.

Going multi-cloud with Terraform and Nomad (Paddy Foran)

The message of the session focuses on multi-cloud. Some of the reasons to choose multi-cloud are resiliency and the ability to consume cloud-specific features (which strikes me as counter-intuitive to the idea of multi-cloud?).

Terraform provisions infrastructure. Terraform is declarative, graph-based (it will sort out dependencies), predictable and API agnostic.
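“Graph-based” here means Terraform builds a dependency graph of resources and walks it so that nothing is created before the things it references. A minimal Python sketch of that idea is Kahn’s topological sort below; the resource names and the `apply_order` function are illustrative only, and unlike this sequential sketch, Terraform also walks independent branches of the graph in parallel.

```python
from collections import deque


def apply_order(depends_on):
    """Return resource names ordered so every dependency precedes its dependents.

    depends_on maps each resource to the resources it references; every
    referenced resource must also appear as a key. Raises ValueError on cycles.
    """
    # Number of dependencies each resource is still waiting for.
    waiting = {name: len(deps) for name, deps in depends_on.items()}
    # Reverse edges: which resources may become unblocked when `name` is done.
    dependents = {name: [] for name in depends_on}
    for name, deps in depends_on.items():
        for dep in deps:
            dependents[dep].append(name)

    # Start with resources that depend on nothing; sort for a stable order.
    ready = deque(sorted(name for name, count in waiting.items() if count == 0))
    order = []
    while ready:
        name = ready.popleft()
        order.append(name)
        for child in sorted(dependents[name]):
            waiting[child] -= 1
            if waiting[child] == 0:
                ready.append(child)

    if len(order) != len(depends_on):
        raise ValueError("dependency cycle detected")
    return order


graph = {
    "aws_vpc.main": [],
    "aws_subnet.a": ["aws_vpc.main"],
    "aws_security_group.web": ["aws_vpc.main"],
    "aws_instance.web": ["aws_subnet.a", "aws_security_group.web"],
}
print(apply_order(graph))
# ['aws_vpc.main', 'aws_security_group.web', 'aws_subnet.a', 'aws_instance.web']
```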

Nomad schedules apps on infrastructure. Nomad is declarative, scalable, predictable and infrastructure agnostic.

Paddy shows a demo of Terraform / Nomad across AWS and GCP. He explains how you can take the outputs of the AWS plan and use them as inputs for the GCP plan and vice versa. This is useful when you need to set up VPN connections between two different clouds and want to avoid lots of (potentially error-prone) manual configuration.

Paddy then customizes the standard example.nomad job to deploy on the “datacenters” he created with Terraform (on AWS and GCP). This runs a Redis Docker container.

The closing remark of the session is that agnostic tools should be the foundation for multi-cloud.

Running Consul at Massive Scale (James Phillips)

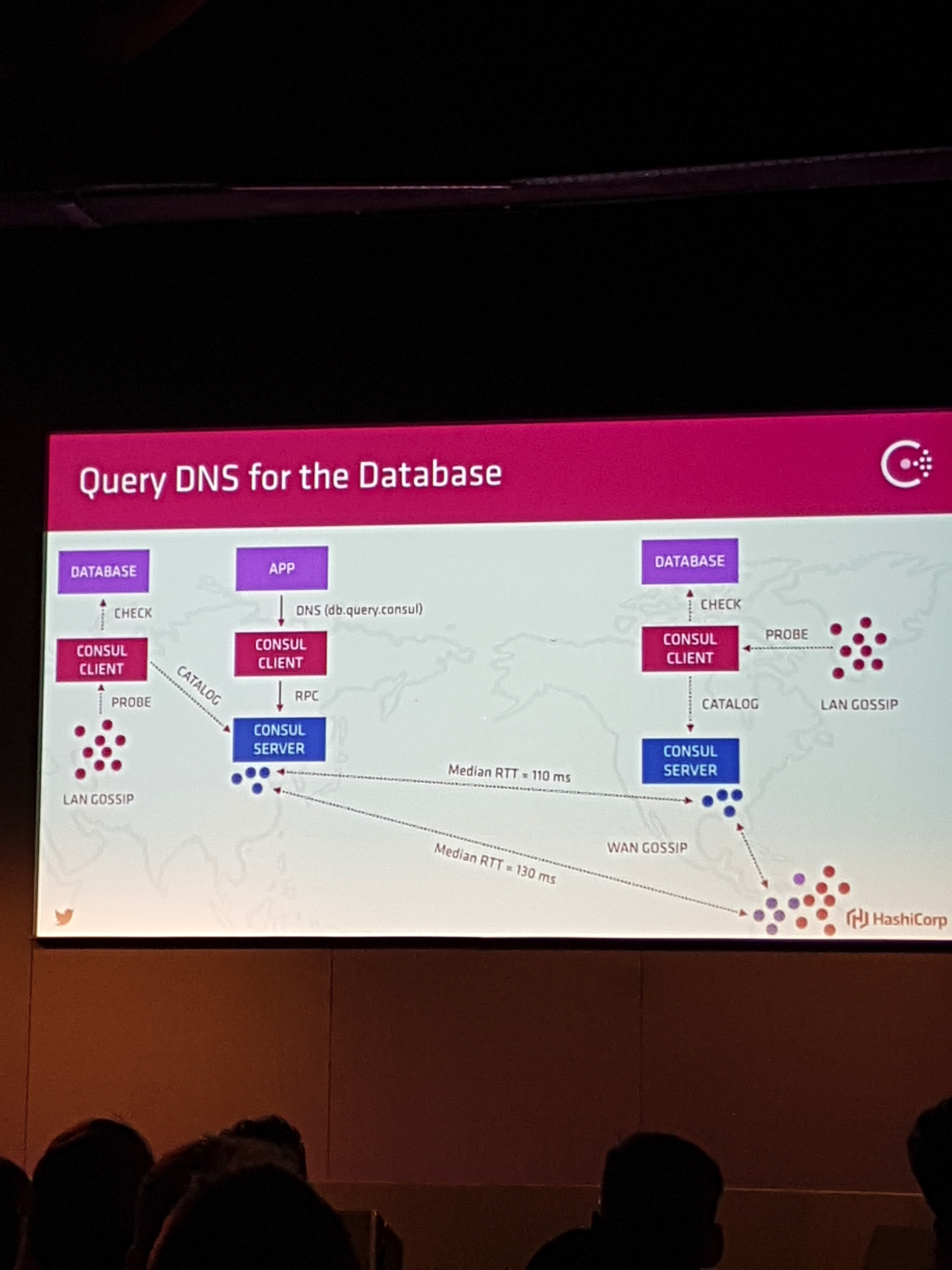

James goes through some fundamental capabilities of Consul (DNS, monitoring, K/V store, etc.).

He then talks about how they have been able to solve scaling problems using a Gossip Protocol.
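The appeal of gossip for scaling is that information spreads in a number of rounds that grows roughly logarithmically with cluster size, without a central coordinator. The Python simulation below sketches only that dissemination property, using simple push gossip with a fixed fan-out; it is not Consul’s protocol (Consul builds on HashiCorp’s Serf/memberlist libraries, which also handle membership and failure detection), and the function and parameter names are hypothetical.

```python
import random


def gossip_rounds(num_nodes, fanout=3, seed=0, max_rounds=1000):
    """Simulate push gossip; return the rounds until every node has the update.

    Node 0 starts with the update. In each round, every node that already has
    it forwards it to `fanout` peers chosen uniformly at random (not itself).
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    informed = {0}
    rounds = 0
    while len(informed) < num_nodes:
        if rounds >= max_rounds:
            raise RuntimeError("gossip did not converge")
        newly_informed = set()
        for node in informed:
            for _ in range(fanout):
                peer = rng.randrange(num_nodes - 1)
                if peer >= node:
                    peer += 1  # skip self: pick uniformly among the other nodes
                newly_informed.add(peer)
        informed |= newly_informed
        rounds += 1
    return rounds


print(gossip_rounds(1000))
```

Increasing `num_nodes` tenfold should typically add only a few rounds, which is the property that makes gossip attractive for large clusters.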

It was a very good, technical session, arguably targeted at existing Consul users/customers who want to fine-tune their Consul deployments at scale.

Nomad and Next-generation Application Architectures (Armon Dadgar)

Armon starts by defining the role of the scheduler (broadly).

HashiCorp had a couple of roles in mind when building Nomad: developers (or Nomad consumers) and infrastructure teams (or Nomad operators).

Like Terraform, Nomad is declarative (not imperative): you describe the desired state and Nomad works out how to get there, without you needing to tell it how.
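Being declarative means you state the desired end state (for example, how many instances of each task group should run) and the scheduler computes the actions needed to converge on it. The Python sketch below shows that diffing step in its simplest form; the `plan_actions` name and the count-only model are assumptions for illustration, and Nomad’s real scheduler additionally handles placement, constraints, resources and rolling updates.

```python
def plan_actions(desired, running):
    """Diff desired instance counts against running ones and list the actions.

    desired: task group name -> number of instances that should be running
    running: task group name -> number of instances currently running
    Groups that are running but no longer desired are scaled down to zero.
    """
    actions = []
    for group in sorted(set(desired) | set(running)):
        want = desired.get(group, 0)
        have = running.get(group, 0)
        if want > have:
            actions.append(("start", group, want - have))
        elif have > want:
            actions.append(("stop", group, have - want))
    return actions


# The operator only declares the target; the diff decides what to do.
print(plan_actions({"web": 2, "cache": 3}, {"web": 4, "batch": 1}))
# [('stop', 'batch', 1), ('start', 'cache', 3), ('stop', 'web', 2)]
```

Running the same function again once the actions have been applied returns an empty list, which is what makes the declarative model safe to re-evaluate repeatedly.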

The goal for Nomad was never to build an end-to-end platform but rather to build a tool that does the scheduling and brings in other HashiCorp (or third-party) tools to compose a platform. This, after all, has always been the HashiCorp spirit: build a single tool that solves a particular problem.

Monolithic applications have intrinsic application complexity. Micro-services applications have intrinsic operational complexity. Frameworks have helped with monoliths, much like schedulers are now helping with micro-services.

Schedulers introduce abstractions that help with service composition.

Armon talks about the “Dispatch” jobs in Nomad (HashiCorp’s FaaS).
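Dispatch jobs are parameterized jobs: a job is registered as a template and only runs when it is dispatched, optionally with a payload and metadata. The Python sketch below models that flow loosely after the options of Nomad’s `parameterized` block (`payload`, `meta_required`, `meta_optional`); the class, its methods and the shape of the returned child job are hypothetical, and in a real cluster dispatching happens through Nomad’s CLI or HTTP API rather than in-process.

```python
from dataclasses import dataclass, field


@dataclass
class ParameterizedJob:
    """Hypothetical in-memory model of a parameterized (dispatchable) job."""
    name: str
    payload: str = "optional"  # one of "required", "optional", "forbidden"
    meta_required: list = field(default_factory=list)
    meta_optional: list = field(default_factory=list)
    dispatched: list = field(default_factory=list)

    def dispatch(self, meta=None, payload=None):
        """Validate the inputs and record a new child job instance."""
        meta = meta or {}
        missing = [key for key in self.meta_required if key not in meta]
        if missing:
            raise ValueError(f"missing required meta keys: {missing}")
        allowed = set(self.meta_required) | set(self.meta_optional)
        unknown = sorted(set(meta) - allowed)
        if unknown:
            raise ValueError(f"unexpected meta keys: {unknown}")
        if self.payload == "required" and payload is None:
            raise ValueError("this job requires a payload")
        if self.payload == "forbidden" and payload is not None:
            raise ValueError("this job does not accept a payload")
        child = {
            "parent": self.name,
            "instance": len(self.dispatched) + 1,
            "meta": dict(meta),
            "payload": payload,
        }
        self.dispatched.append(child)
        return child


job = ParameterizedJob("video-transcode", payload="required",
                       meta_required=["codec"], meta_optional=["bitrate"])
child = job.dispatch({"codec": "h264"}, payload=b"input.mp4")
```

The template itself never runs; each successful `dispatch` call produces a separate child instance, which is what gives this model its FaaS-like, on-demand character.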

Evolving Your Infrastructure with Terraform (Nicki Watt)

Nicki is the CTO @ OpenCredo.

There is no right or wrong way of doing things with Terraform. It really depends on your situation and scenario.

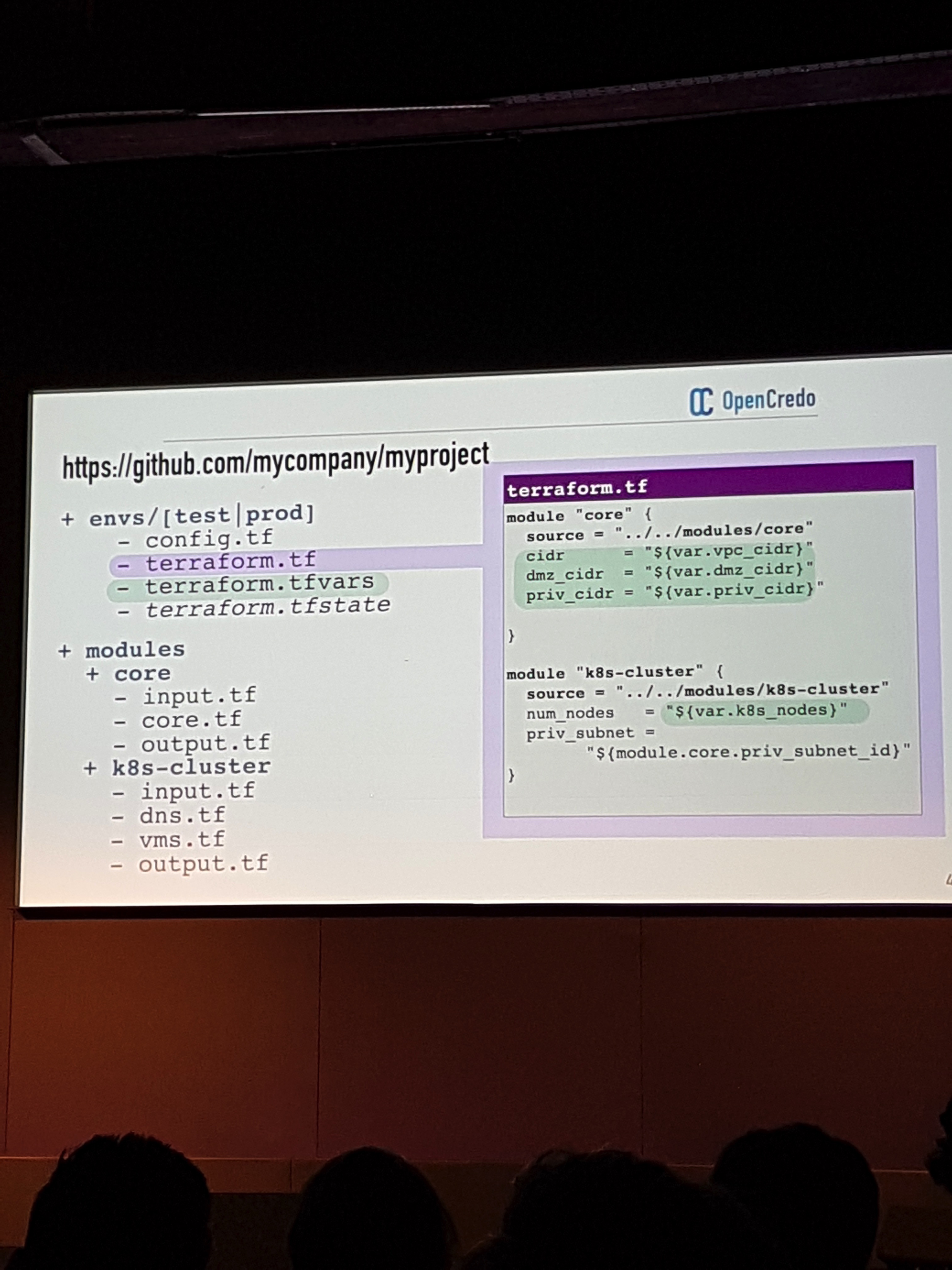

The first example Nicki talks about is a customer who used Terraform to deploy infrastructure on AWS to set up Kubernetes.

She walks through the various stages of maturity that customers find themselves in. They usually start with hard-coded values inside a single configuration file. Then they start using variables and applying them to parameterized configuration files.

Customers then move on to a pattern where there is usually a main Terraform configuration file composed of reusable and composable modules.

Each module should have very clearly identified inputs and outputs.

The next phase is nested modules (base modules embedded into logical modules).

The last phase is to treat subcomponents of the setup (e.g. core infra, RDS, K8s cluster) as totally independent modules. This way you manage these components independently, limiting the possibility of making a change (e.g. to a variable) that affects the entire setup.

Once you have moved to this “distributed” stage of independent components and modules, you need to orchestrate what runs first, etc. Different people solve this problem in different ways, from README files that guide you through the manual steps, through off-the-shelf tools such as Jenkins, all the way to DIY orchestration tools.

This was really an awesome session! Very practical and very down to earth!

Operational Maturity with HashiCorp (James Rasell and Iain Gray)

This is a customer talk.

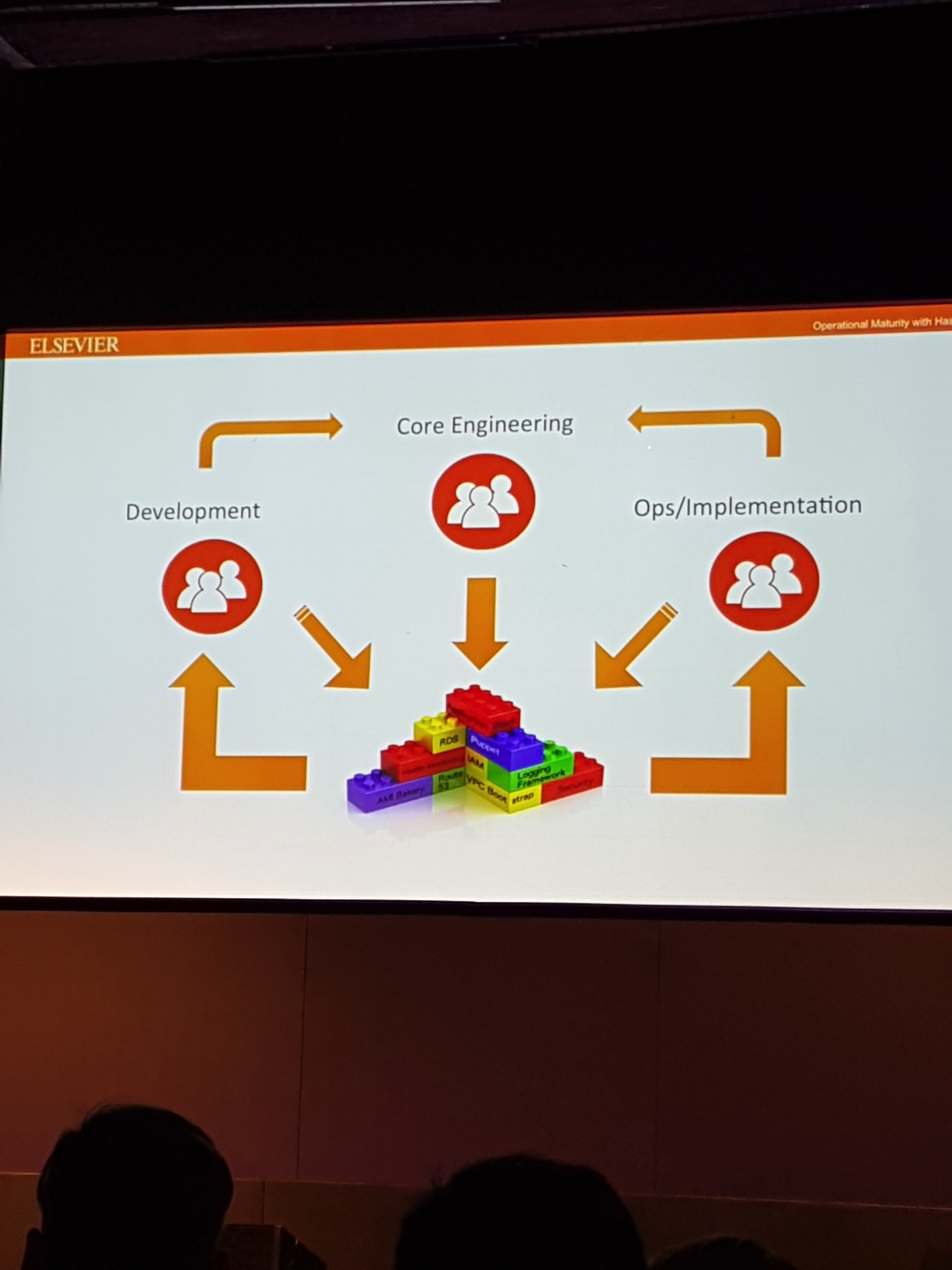

Elsevier has an AWS-first strategy. They have roughly 40 development teams (each with 2-3 AWS accounts, and each account has 1-6 VPCs).

This is very hard to manage manually at this scale, so Elsevier has established a practice inside the company to streamline and optimize these infrastructure deployments (they call this practice “operational maturity”). This is the charter of the Core Engineering team.

The “operational maturity” team has 5 pillars:

- Infrastructure governance (base infrastructure consistency across all accounts). They have achieved this via a modular Terraform approach (essentially a catalog of company-standard TF modules that developers reuse).

- Release deployment governance

- Configuration management (everything is under source control)

- Security governance (an “AMI bakery” that produces secured AMIs and makes them available to developers)

- Health monitoring

They chose Terraform because:

- it had a low barrier to entry

- it was cloud agnostic

- it allowed infrastructure to be codified and kept under version control

Elsevier suggests that in the future they may want to use Terraform Enterprise, which underlines the difficulty of monetizing open source software: they are apparently extracting a great deal of value from Terraform, but HashiCorp is making nothing out of it.

Code to Current Account: a Tour of the Monzo Infrastructure (Simon Vans-Colina and Matt Heath)

Enough said. They are running (almost) entirely on free software (with the exception of a small system that allows communications among banks). I assume this implies they are not using any HashiCorp Enterprise pay-for products.

Monzo went through some technology “trial and fail” such as:

- from Mesos to Kubernetes

- from RabbitMQ to Linkerd

- from AWS CloudFormation to Terraform

They currently have roughly 250 services, all communicating with each other over HTTP.

They use Linkerd for inter-service communication. Matt mentions that Linkerd integrates with Consul (if you use Consul).

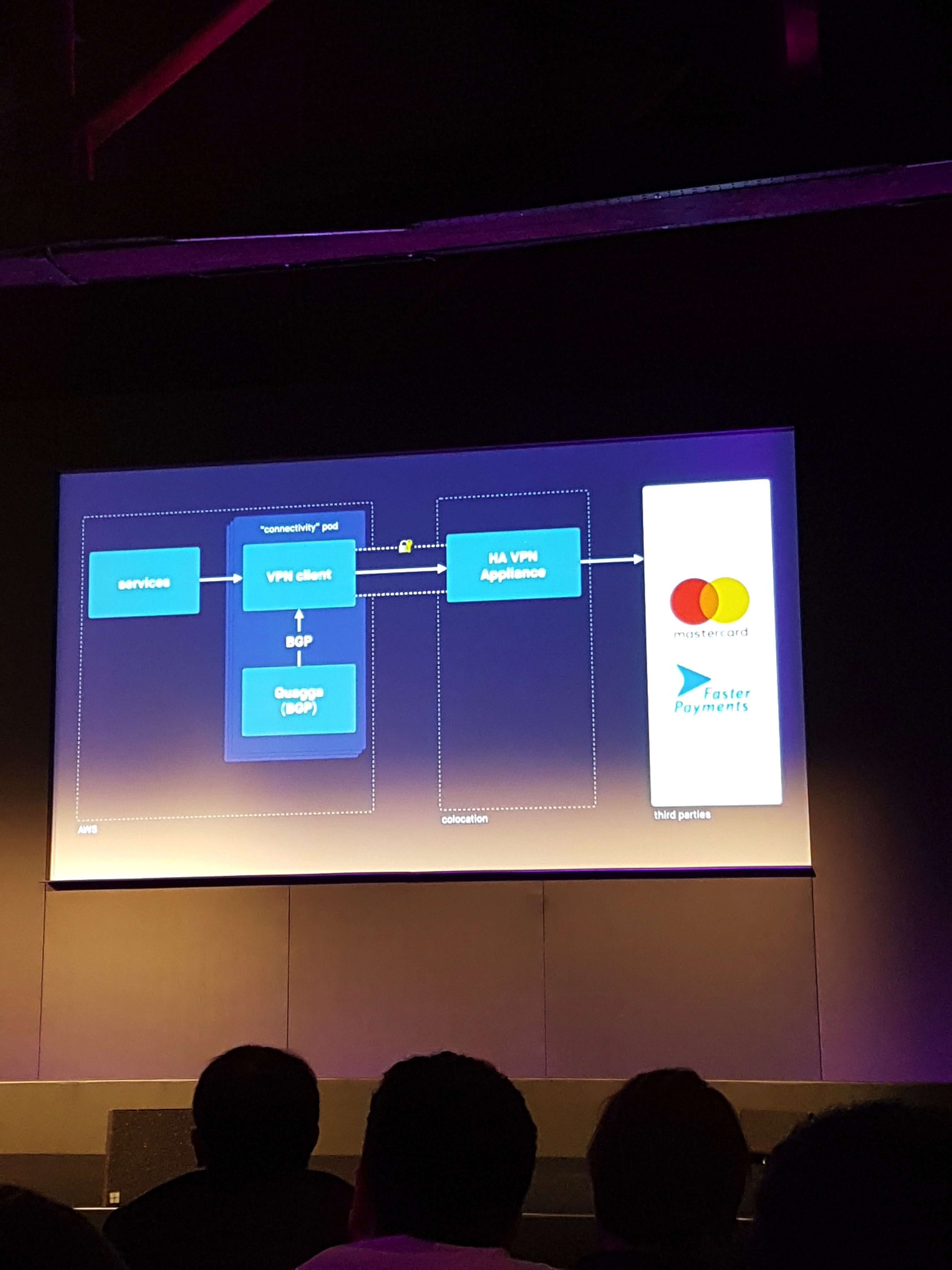

They found they had to integrate with some banking systems (e.g. Faster Payments) via on-prem infrastructure (Matt: “these services do not provide an API, they rather provide a physical fiber connection”). They appear to be using on-prem capacity mostly as a proxy into AWS.

Terraform Introduction training

The day after the event I attended the one-day “Terraform Introduction” training. This was a mix of lectures and practical exercises. The mix was fair and overall the training wasn’t bad (albeit some of the lecture material was very basic and redundant with what I already knew about Terraform).

The practical part guides you through deploying instances on AWS, using modules and variables, and using Terraform Enterprise towards the end.

I would advise taking this specific training only if you are very new to Terraform, given that it assumes you know nothing. If you have already used Terraform in one way or another, it may be too basic for you.