The (Upside Down) Economics of Public Clouds

In the last few years we have collectively spent an outrageous amount of time talking and arguing about the “per VM” cost of running workloads on-prem vs. running them in a public cloud.

While doing so, we forgot to take into account the elephant in the room: the (upside down) economics of (some) public clouds.

The general vendor approach to “monetization strategy”

Let’s take a step back.

We live in a time where we tend to regard raw compute capacity as a “commodity” and the software / services that extract value from said capacity as “added value”.

The cynics will read this as: there is no money/profits to be made on the former while there is a lot of money/profits to be made out of the latter.

There are many examples where we have seen this in action (at different levels in the stack):

- x86 hardware is commodity while software that runs on top of it adds value.

- hypervisors are a commodity while the management software that controls them adds value.

- etc.

(Almost) every vendor in this industry is trying to stay “top of the stack” to gain a control point, commoditizing what’s underneath it and, ultimately, making money out of this approach.

An example of this is as near as the blog post I published just before this one.

You can (over)simplify this concept with a simple rule of thumb: “money follows the value”.

Or, in other words, you can say that “users are willing to pay for what they actually need and value”.

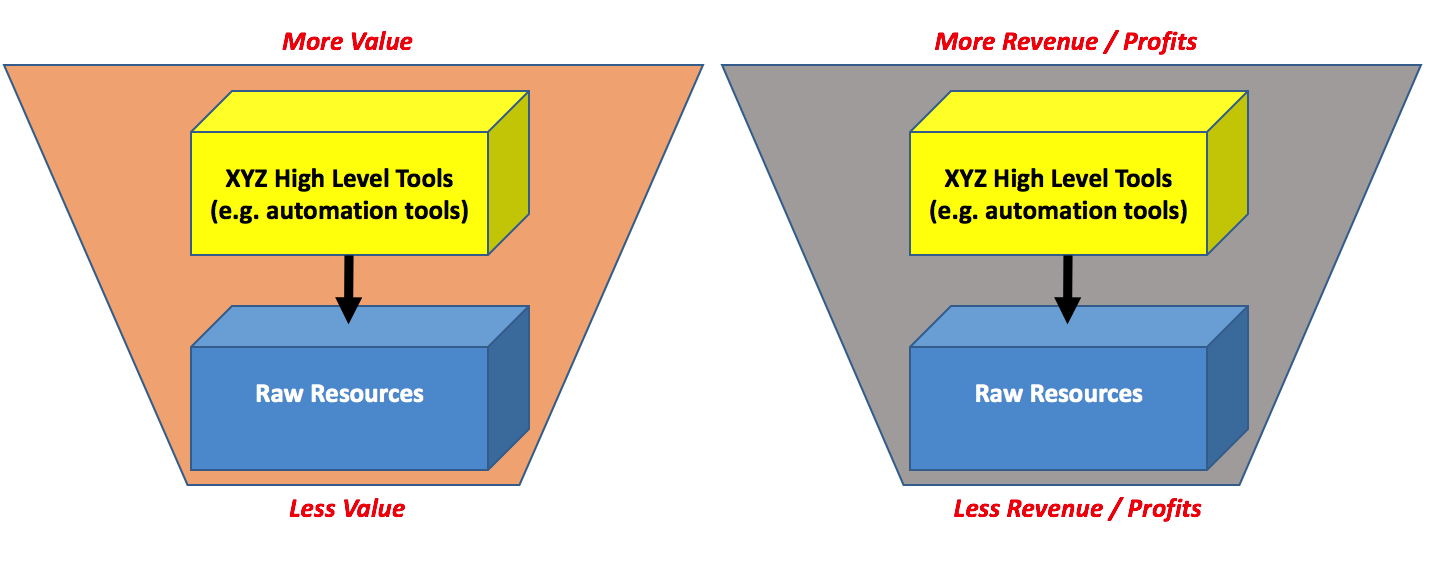

This is a picture of this concept:

A public cloud approach to “monetization strategy”

Now that we have discussed a broad view of the general industry approach to “making money”, let’s turn to AWS, the leader in the public cloud space. While I am going to focus on AWS these same concepts may apply to other public cloud providers (e.g. Microsoft Azure) albeit admittedly not to all of them.

If AWS were to adopt the same monetization strategy, they would have to give away raw compute resources while charging for their higher level services. Very simple.

That isn’t what they are doing though.

While AWS doesn’t provide any figures, cloud pundits speculate that the vast majority of AWS revenue (and profits!) comes from the usual suspects: EC2 instances, EBS storage, S3 (and a few others). You can’t get more “raw resources” than this!

However, it is well understood that, while part of the AWS value comes from their Pay-As-You-Go business model, a large chunk of the AWS value is in its automation (and generally speaking their higher level) services.

Now let’s have a look at the higher level (e.g. automation) services AWS offers and their respective pricing policy:

- AWS CloudFormation: “...There is no additional charge for AWS CloudFormation. You pay for AWS resources (such as Amazon EC2 instances, Elastic Load Balancing load balancers, etc.) created using AWS CloudFormation in the same manner as if you created them manually...”

- AWS Elastic Beanstalk: "...There is no additional charge for AWS Elastic Beanstalk. You pay for AWS resources (e.g. EC2 instances or S3 buckets) you create to store and run your application...”

- AWS ECS: "...There is no additional charge for Amazon EC2 Container Service. You pay for AWS resources (e.g. EC2 instances or EBS volumes) you create to store and run your application...”

- AWS OpsWorks: “...There is no additional charge for OpsWorks. You pay for AWS resources (e.g. EC2 instances, EBS volumes, Elastic IP addresses) created using OpsWorks in the same manner as if you created them manually..."

- AWS CodeDeploy: "...There is no additional charge for code deployments to Amazon EC2 instances through AWS CodeDeploy..."

- ...

There are more services with a similar pricing scheme, but I think you get the point I am trying to make without me boring you more than this.

A “regular industry vendor” (applying standard and common industry best practices) would probably package all of these tools together, call them a suite and try to make a whole lot of money out of those.

That’s not what AWS is doing. AWS is implementing a slightly different business model where they give away the tools that drive consumption of resources to charge for those resources being consumed.

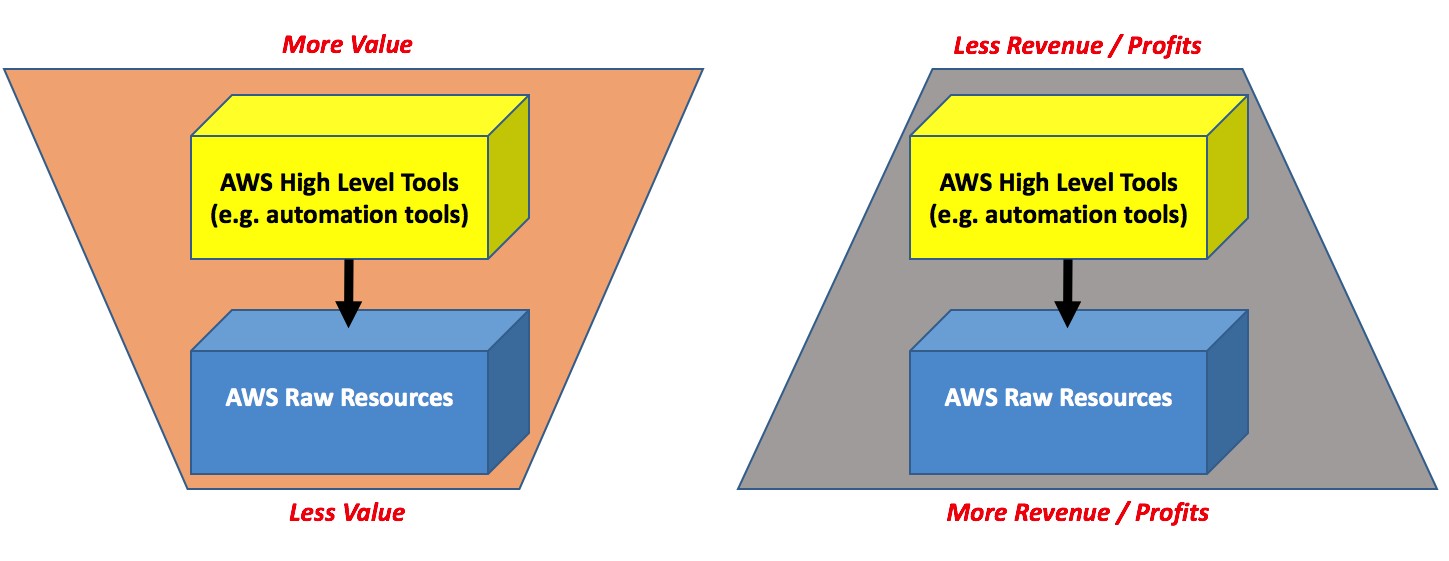

Here it is the concept in a picture:

No but… wait! Not all of the AWS (higher level) services are free

That is correct. There are a few value-added services that AWS has decided to monetize on top of standard instances. A few that come to mind are AWS RDS, AWS ElastiCache, AWS EMR.

These are all services that run off EC2 but they have separate pricing based on instance types (where the idea is that you pay for the base instance type + the value provided by that particular service running on it).

There is also a completely different set of managed services that aren’t easy to correlate to discrete EC2 instances and that are charged separately. Examples of such services are AWS Lambda and AWS DynamoDB.

These may be considered exceptions to the main point of this article (i.e. AWS is giving away higher level value to charge for resources consumed).

However, if you try to zoom out and get the big picture, you will see that AWS is applying the very same (upside down) approach, just at a different level.

These days AWS is releasing Lumberyard, which they describe, in their own words, as a “cross-platform, 3D game engine for you to create the highest-quality games…”.

Not surprisingly this is a free tool and if you read the FAQ it says:

"(Q) Which AWS services are available in Cloud Canvas?

(A) Cloud Canvas enables you to use DynamoDB, S3, Cognito, SQS, SNS, and Lambda via the Lumberyard Flow Graph visual scripting tool”

Here they are again. Similar to how they were giving away higher level EC2 instance orchestration services (and making money out of the orchestrated objects), now they are giving away tools for industry verticals (as a piece of software in the case of Lumberyard) while charging for the lower level resources that users of such a vertical may need to use on AWS.

Same approach, different level.

Lather, rinse, repeat.

What are the ramifications of this approach?

There are many things that are interesting to watch because of this particular monetization strategy that public clouds (and specifically AWS) are leveraging.

First and foremost, trying to make a like-for-like comparison between different public clouds and/or between a public cloud and a private cloud is a titanic effort and one that has a million variables. Yes, you could waste a month cross-referencing all of your naïve data and determine that running a VM here is 3 cents cheaper than running a VM there... but then if it will cost you $2M to procure and/or operationalize (here) a higher level service that you could get for free (there)... what on earth are we talking about?
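To put rough numbers on that, here is a back-of-the-envelope sketch of the break-even point. It assumes the 3 cents saving is per VM-hour (the figure above does not state a unit) and that the $2M is a one-off cost; the function names are mine and purely illustrative.

```python
import math

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def break_even_vm_hours(service_cost_usd: float, saving_per_vm_hour_usd: float) -> float:
    """VM-hours you must run before the per-VM saving pays for the service.

    Assumption: the saving is expressed per VM-hour and the service cost
    is a single up-front amount.
    """
    if saving_per_vm_hour_usd <= 0:
        raise ValueError("saving per VM-hour must be positive")
    return service_cost_usd / saving_per_vm_hour_usd


def vms_for_one_year(service_cost_usd: float, saving_per_vm_hour_usd: float) -> int:
    """Number of always-on VMs needed to recoup the service cost within one year."""
    hours = break_even_vm_hours(service_cost_usd, saving_per_vm_hour_usd)
    return math.ceil(hours / HOURS_PER_YEAR)


if __name__ == "__main__":
    hours = break_even_vm_hours(2_000_000, 0.03)
    vms = vms_for_one_year(2_000_000, 0.03)
    print(f"Break-even VM-hours: {hours:,.0f}")
    print(f"Always-on VMs needed to break even in a year: {vms:,}")
```

Under those assumptions you would need tens of millions of VM-hours (thousands of VMs running around the clock for a year) before the cheaper VM makes up for the service you had to buy or build yourself.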

I have never been a big fan of TCO studies, but in this context they are (less than) useless to start with.

Another interesting ramification of this (upside down) monetization strategy is that it is very difficult to track service consumption and consumption patterns. Sticking with AWS, sure EC2 is king and it’s probably (one of) their most successful services. You could (and should) assume that it is a commodity, but what would it take to beat EC2 if you were a competitor trying to steal users from AWS? Are their users using EC2 because they think it’s awesome? Or are they using EC2 because they think (e.g.) CloudFormation is awesome and EC2 is just, incidentally, what these tools happen to leverage for compute capacity? These users may have found cheaper raw resources elsewhere but they, perhaps, fell in love with (e.g.) Elastic Beanstalk?

It is obvious that AWS has all of these data internally but, from the outside, it’s difficult to see and understand the what and why of a particular consumption pattern of raw cloud resources.

Conclusions

This short blog post points out how public clouds (and AWS in particular) are changing the economic rules of the game by using many techniques.

The industry, in the last few years, has focused the majority of its attention on the “pay per use” business model of public clouds. This model may or may not appeal to end users (depending on their own consumption patterns).

Public clouds are changing the game by also completely inverting the paradigm of what they charge for, thus making price comparisons very difficult.

As my UK friends would say, mind the gap.

Massimo.