vCHS Meets vCO (and Boris Becomes a Hero!)

There are so many things I'd like to show and talk about here that I wanted to dumb down the title as much as possible.

The trigger

This all started (a long time ago) when I realized that with AWS you can deploy an EC2 instance and SSH/RDP into it within a couple of minutes and with just a few clicks. With vCHS (and vCD, for that matter) you can deploy an instance in pretty much the same time, but then you have to separately configure the Edge Gateway to put the proper NAT and firewall rules in place (see this blog post for vCD networking configuration samples if you are new to vCD). While VMware is continuously improving the portal and the user experience, I wanted to find a way to address this problem in "consumer space" (i.e. what a user can do without waiting for vCHS engineering to improve the service).

If there is one thing I learned playing with the vCHS APIs, it is that you can change the behavior of the portal (see the "How to change the computer name of the VM" section in this blog post). So while vCHS doesn't have a wizard that lets you deploy a VM and customize its surrounding network and security settings all in one go, you can build a string of API calls that makes that happen in a single transaction. Hold tight.

The excitement (so to speak)

This is where I started. But I didn't stop there.

I wanted to bolt a bit of hybrid buzz on top of this concept. Wouldn't it be awesome if you could execute that multi-call API transaction from the vSphere web client, so that a vSphere admin could deploy an "Internet VM" in vCHS as if it were a local deployment?

Back in December VMware released the vCHS vSphere Client plugin, which is awesome, but that plugin can be neither extended nor customized. In addition, as of today, the vCHS vSphere Client plugin can only deploy VMs; it cannot (yet) configure network and security services in vCHS.

Long story short: enter vCenter Orchestrator.

With vCenter Orchestrator I could achieve both things:

- I could chain API calls that, in a single transaction, deploy a VM and configure the network and security services associated with it.
- I could expose that transaction in the vSphere client by virtue of the existing vCO and vSphere web client integration.

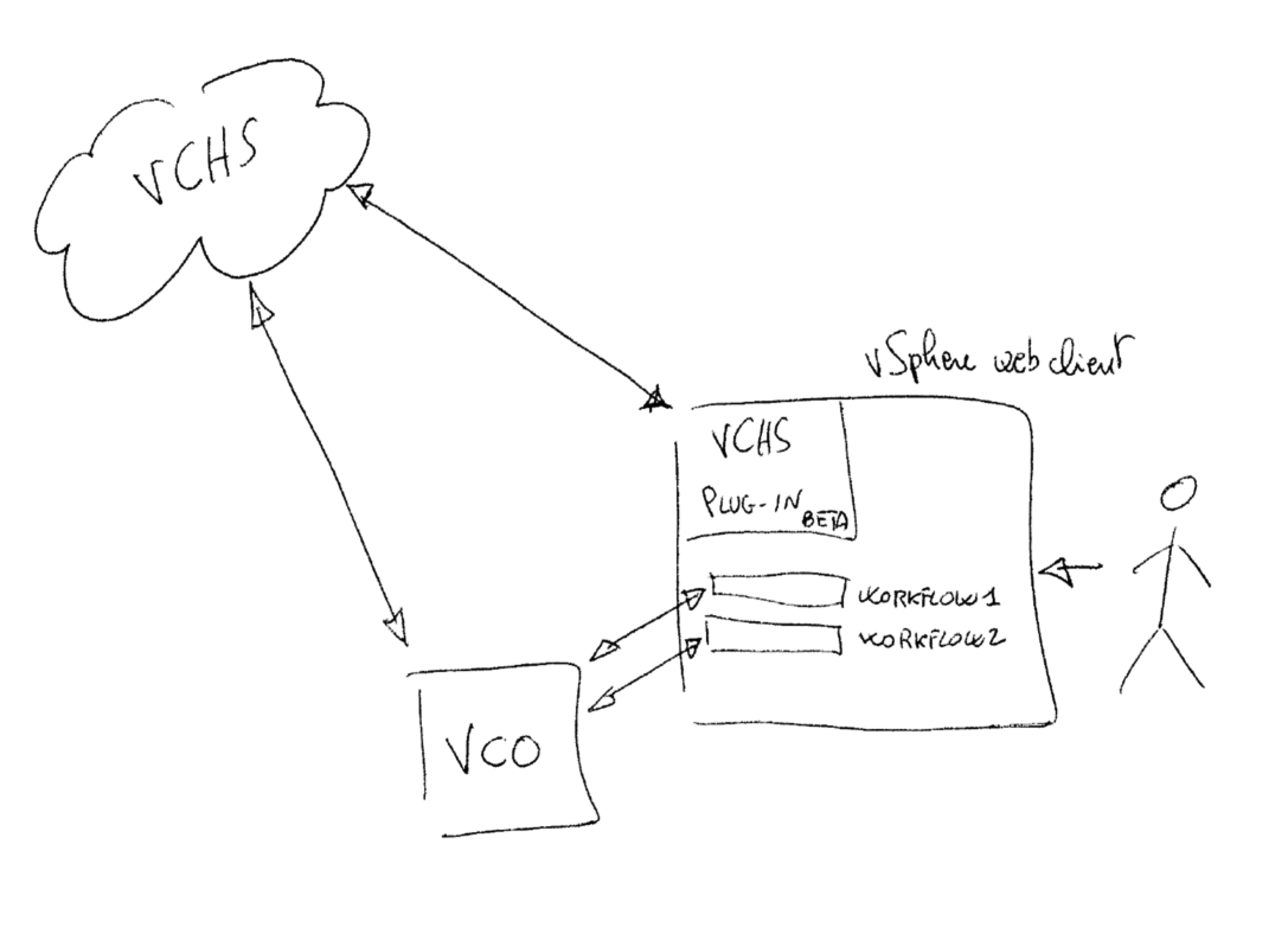

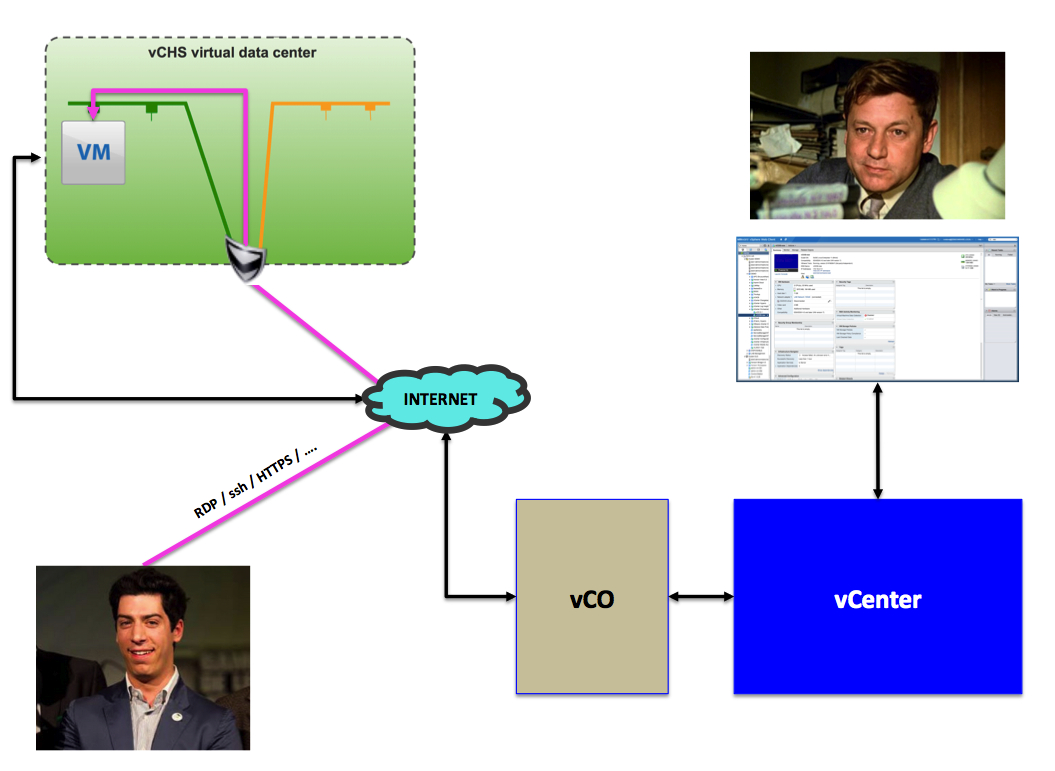

So this was the first sketch of what I had in mind. The journey begins:

The Roadblocks

This journey wasn't a piece of cake. I must admit that.

I am going to focus this blog post on the result, not so much on the "sausage making" aspect of it. But, in complete transparency, I think it is good to touch on a couple of aspects that were particularly problematic.

The first set of roadblocks I found is related to the fact that the vCD plugin for vCO was designed around the assumption that the user is a cloud admin (aka vCloud Director Cloud Admin) and not a cloud tenant (aka vCloud Director Org Admin). Since vCHS consumers are always cloud tenants (even when they subscribe to a Dedicated Cloud), a lot of the out-of-the-box vCD plugin workflows in vCO won't work. Even workflows that are supposed to be available to tenants (e.g. configuring an Edge Gateway NAT rule) have been built using API shortcuts that are only available to cloud admins (and not to tenants).

I wouldn't define this as "poor design" though. I would define this as a design for a totally different use case compared to what I am trying to achieve here.

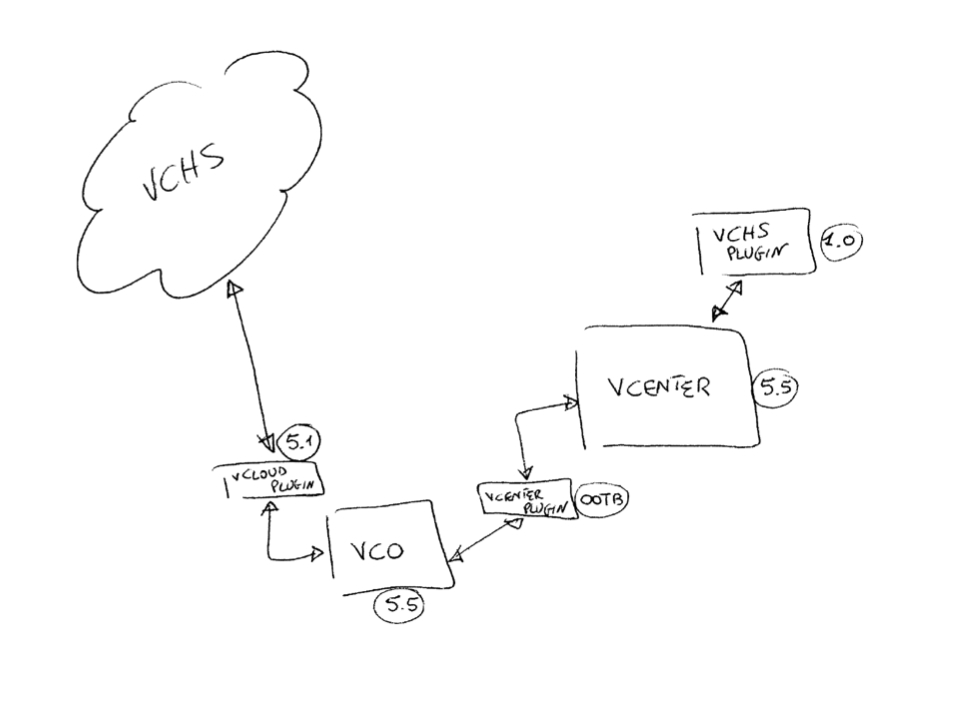

The second roadblock I found ties back to one of my old rants about the cost of building clouds. I have experienced first-hand the complexity of aligning a number of different products with different versions and compatibility requirements. This is the second sketch I made on the journey; while neither detailed nor exhaustive, it gives you an idea of the problem:

So much for the sausage making part.

Big shout out to Aleksandar Lazarov in the vCO engineering team for fixing on the fly those roadblocks (in the vCD plugin and workflows), which allowed me to build the prototype below. Please note that those fixes haven't been made public, so: "kids, don't try this at home" (it won't work with currently shipping code).

The Storyboard (Use Case)

I'll use a storyboard to give you an idea of what sort of scenario this prototype can address. The scenario I am going to describe is one among a million that you can think of where these technologies could apply. It goes like this:

Boris works in the IT department of a big Enterprise shop. He owns vSphere there. Lately he has noticed a surge in casual requests from peers in the IT department (and even outside) for transient VMs that colleagues need for testing, development, product evaluations and so forth.

These people often need to work closely with remote third-party partners (who would need access to these VMs too). Boris is scratching his head because fulfilling those requests raises a lot of issues:

- Boris doesn't have a lot of capacity left in his private vSphere environment. Where is he going to put those VMs?
- Boris asked the security team about giving external partners access to VMs sitting on his vSphere infrastructure, and they had a good laugh at him and his ask. How can he solve this problem?
- Boris doesn't have a private cloud project going on. There are talks about it, but right now he must fulfill those requests manually.

Boris also thinks that "cloud" is a buzzword, but that is another story.

The Solution (Philosophy)

Boris decides to subscribe to vCHS to gain immediate access to additional capacity in an OPEX model.

Boris isn't going to give these internal and external folks direct access to vCHS. Instead, Boris will get the request (via whatever channel) and create those VMs using a vCO workflow that he can launch from where he spends 18 hours a day (that is, the vSphere web client).

Boris doesn't have a lot of public IP addresses subscribed with vCHS and wants to keep their number to a minimum anyway. He will give users access to those VMs via NAT port mappings that the users themselves suggest.

Note: in addition to not consuming public IP addresses, this port mapping also makes exposing SSH and/or RDP access safer (e.g. automated SSH attacks usually target port 22, not the arbitrary ports you choose when NATting).

For example:

- User1 will get RDP access to a Windows VM on port 999 of the Edge Gateway IP.
- User2 will get RDP access to a Windows VM on port 998 of the Edge Gateway IP.
- User3 will get SSH access to a Linux VM on port 997 of the Edge Gateway IP.
- User4 will get HTTPS access to a Linux VM on port 996 of the Edge Gateway IP.
- etc.
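The mapping scheme above amounts to a small table keyed by external port on the one Edge Gateway public IP. The Python sketch below shows that bookkeeping; the `PortMap` and `Mapping` names are hypothetical (nothing in vCO or vCHS provides them), and the IPs are placeholder documentation and private addresses. It makes explicit why a single public IP can serve many users, provided no two requests claim the same external port:

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Mapping:
    """One DNAT entry: edge_ip:external_port -> vm_ip:internal_port."""
    vm_name: str
    vm_ip: str
    internal_port: int


@dataclass
class PortMap:
    """Hypothetical helper tracking which external ports on the single
    Edge Gateway public IP are already taken."""
    edge_ip: str
    entries: dict[int, Mapping] = field(default_factory=dict)

    def allocate(self, external_port: int, mapping: Mapping) -> str:
        # Every user shares the same public IP, so the external port
        # is the only thing that tells one VM apart from another.
        if not 1 <= external_port <= 65535:
            raise ValueError(f"invalid port {external_port}")
        if external_port in self.entries:
            taken = self.entries[external_port].vm_name
            raise ValueError(f"port {external_port} already mapped to {taken}")
        self.entries[external_port] = mapping
        return f"{self.edge_ip}:{external_port}"


if __name__ == "__main__":
    # Placeholder values only.
    ports = PortMap(edge_ip="203.0.113.10")
    print(ports.allocate(999, Mapping("user1-win", "192.168.109.2", 3389)))
    print(ports.allocate(997, Mapping("user3-linux", "192.168.109.4", 22)))
```

In the prototype this check is left to Boris himself; doing it up front simply avoids two requests competing for the same public IP and port.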

Essentially, the users requesting the VMs only need to provide 5 pieces of information to Boris:

- The VM template they want (Windows, Linux etc.)
- The name of the VM
- The external TCP port
- The internal TCP port
- An email address where they will be notified that provisioning has completed (and how to connect to the VM)
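Those five inputs map naturally onto a small record that can be sanity-checked before anything gets deployed. This is a hypothetical Python sketch: the `VmRequest` name, the template keys and the validation rules are assumptions for illustration, not checks the vCO workflow performs (the prototype itself has no error control):

```python
import re
from dataclasses import dataclass

# Hypothetical template choices offered to users.
TEMPLATES = {"windows", "linux"}


@dataclass(frozen=True)
class VmRequest:
    template: str       # which VM template to deploy
    vm_name: str        # name of the VM
    external_port: int  # TCP port on the Edge Gateway public IP
    internal_port: int  # TCP port the service listens on inside the VM
    email: str          # where the connection details are sent

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the request looks usable."""
        errors = []
        if self.template not in TEMPLATES:
            errors.append(f"unknown template {self.template!r}")
        # 15 characters mirrors the Windows NetBIOS computer-name limit (an assumption here).
        if not re.fullmatch(r"[A-Za-z0-9-]{1,15}", self.vm_name):
            errors.append("VM name must be 1-15 letters, digits or hyphens")
        for label, port in (("external", self.external_port), ("internal", self.internal_port)):
            if not 1 <= port <= 65535:
                errors.append(f"{label} port {port} is out of range")
        if "@" not in self.email:
            errors.append(f"email address {self.email!r} looks invalid")
        return errors
```

For a request like Massimo's, `VmRequest("windows", "massimo-w2k8", 999, 3389, "massimo@example.com").validate()` returns an empty list.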

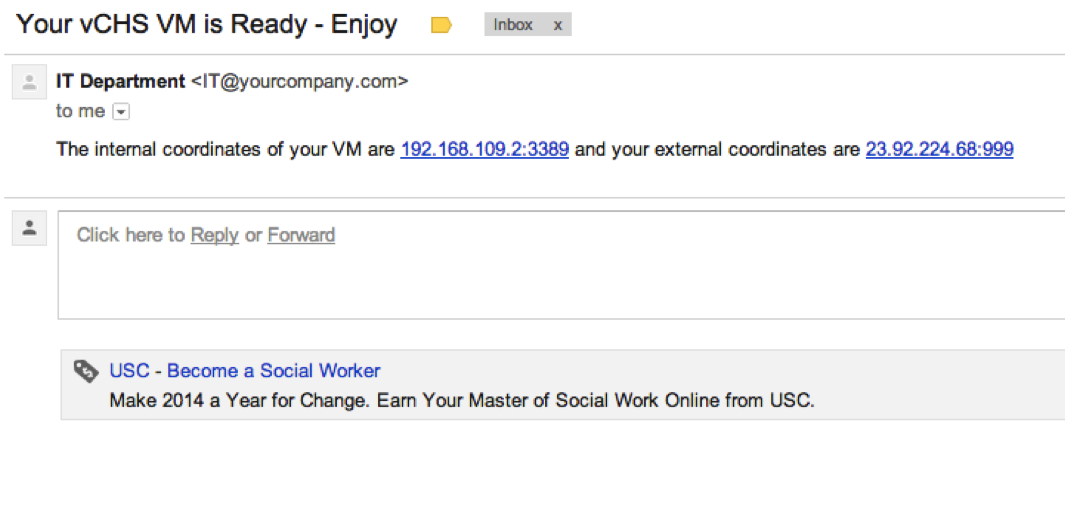

In return the user will get an email with a summary of the public IP and external port he/she will need to use to connect to the VM.
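Putting that email together is plain text assembly. A minimal Python sketch using the standard library's `email.message.EmailMessage`, assuming a hypothetical sender address and connection hints keyed by internal port (the prototype does this with its own [Create Output] and [Send notification] steps inside vCO, not with this code):

```python
from email.message import EmailMessage

# Hypothetical connection hints keyed by the VM's internal service port.
HINTS = {
    3389: "Open your RDP client and connect to {ip}:{port}",
    22: "Run: ssh -p {port} <user>@{ip}",
    443: "Browse to https://{ip}:{port}/",
}


def build_notification(to_addr: str, vm_name: str, edge_ip: str,
                       external_port: int, internal_port: int,
                       from_addr: str = "boris@example.com") -> EmailMessage:
    """Compose (but do not send) the 'your VM is ready' email."""
    hint = HINTS.get(internal_port, "Connect to {ip}:{port}")
    body = (
        f"VM name:       {vm_name}\n"
        f"Public IP:     {edge_ip}\n"
        f"External port: {external_port}\n\n"
        f"{hint.format(ip=edge_ip, port=external_port)}\n"
    )
    msg = EmailMessage()
    msg["Subject"] = f"Your VM {vm_name} is ready"
    msg["From"] = from_addr
    msg["To"] = to_addr
    msg.set_content(body)
    return msg
```

Actually sending it would be a matter of handing `msg` to `smtplib.SMTP(host).send_message()` against whatever mail relay is available.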

The Solution (Architecture and Flow)

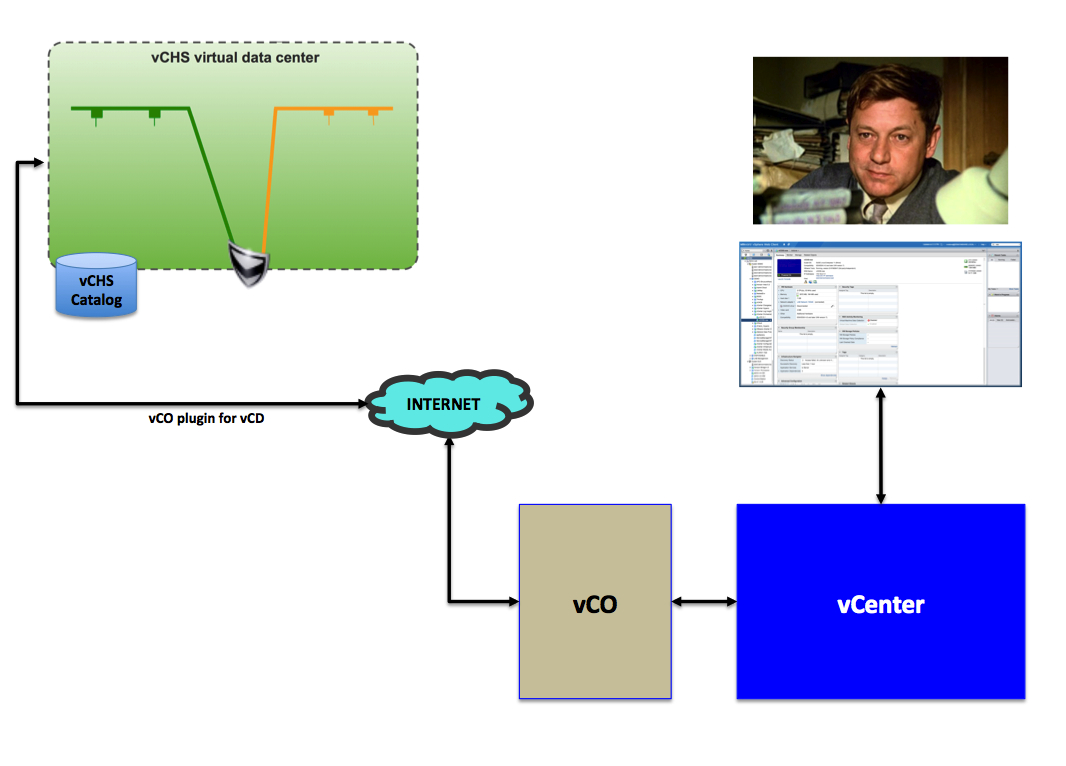

This is what Boris set up to accomplish the above:

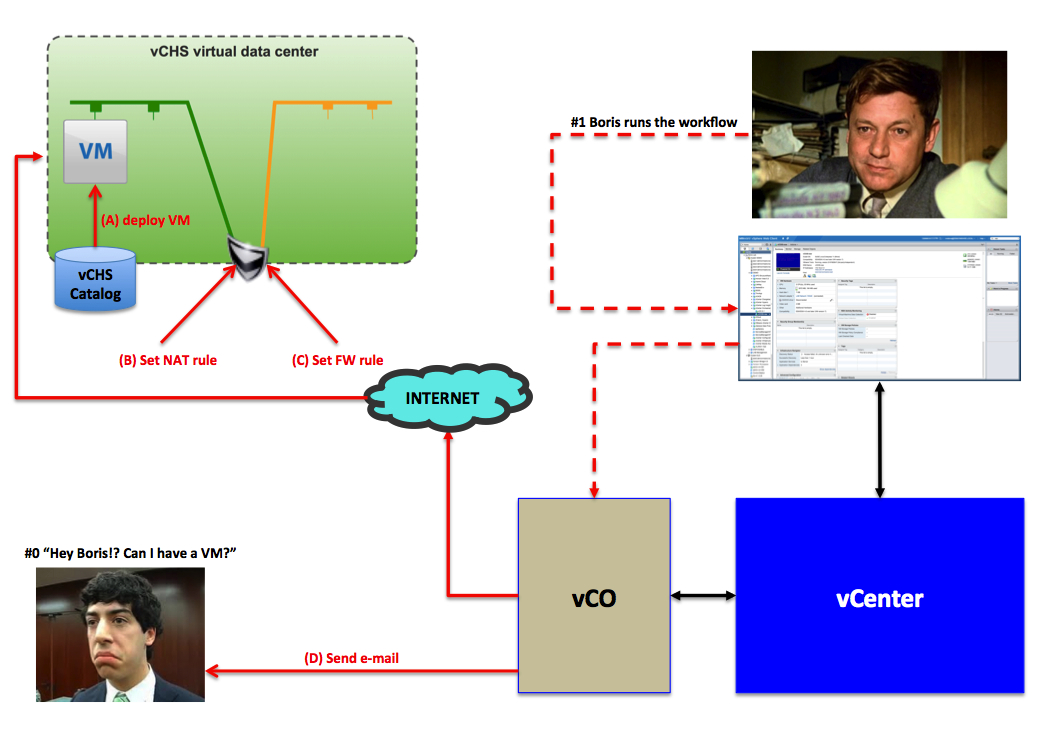

This is what happens when a user calls Boris to get a VM. Boris gets the message (phone, email, chat at the coffee break, whatever) and runs the vCO workflow from within the vSphere web client:

And this is the happy user, who consumes his VM according to his specifications:

The Solution (Screenshots)

The video in the section below gives a more complete and detailed overview of the prototype, but here are a few screenshots of what the process looks like.

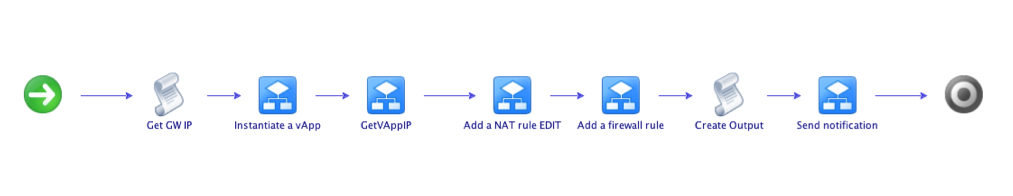

First and foremost, here is a picture of the vCO workflow, the heart of what I have been doing.

Another shout out is due here. This would not have been possible without the help of Andrea Siviero. We had so much fun building the LiquidDC utility that I thought he would have even more fun with vCO (which he has mastered). Thanks Andrea.

This is, at a high level, what the workflow does:

- It programmatically reads the public IP of the Gateway [Get GW IP]
- It deploys a vApp [Instantiate a vApp]
- It programmatically reads the IP of the VM in the vApp deployed above [GetVAppIP]
- It configures a NAT rule [Add a NAT rule EDITED]
- It configures a FW rule [Add a firewall rule]
- It creates a string of parameters (IPs and Ports) used to inform the user on how to connect to the VM [Create Output]
- It sends an email to the user with the info above [Send notification]
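Structurally, the workflow is a linear chain in which each step consumes what earlier steps produced (the VM IP from [GetVAppIP] feeds the NAT rule, for instance). vCO workflows are assembled in the vCO client with JavaScript scriptable tasks, so the following Python is only an analogue: a sketch with stub steps named after the workflow elements, fake values, and, like the prototype, no rollback when a step fails:

```python
from typing import Callable

# A step receives the shared context and returns the keys it adds.
Context = dict
Step = Callable[[Context], Context]


def run_workflow(steps: list[tuple[str, Step]], inputs: Context) -> Context:
    """Run named steps in order over a shared context.

    There is no compensation logic: a failing step stops the run and
    whatever earlier steps created (vApp, NAT rule...) stays in place.
    """
    ctx = dict(inputs)
    completed: list[str] = []
    for name, step in steps:
        try:
            ctx.update(step(ctx))
        except Exception as exc:
            raise RuntimeError(f"step {name!r} failed after {completed}") from exc
        completed.append(name)
    ctx["completed"] = completed
    return ctx


def demo_steps() -> list[tuple[str, Step]]:
    """Stubs named after the workflow elements; all values are fake."""
    return [
        ("Get GW IP", lambda c: {"edge_ip": "203.0.113.10"}),
        ("Instantiate a vApp", lambda c: {"vapp": c["vm_name"]}),
        ("GetVAppIP", lambda c: {"vm_ip": "192.168.109.2"}),
        ("Add a NAT rule EDITED", lambda c: {
            "nat": f"{c['edge_ip']}:{c['external_port']} -> {c['vm_ip']}:{c['internal_port']}"}),
        ("Add a firewall rule", lambda c: {"fw_port": c["external_port"]}),
        ("Create Output", lambda c: {"output": f"{c['edge_ip']}:{c['external_port']}"}),
        ("Send notification", lambda c: {"notified": c["email"]}),
    ]


if __name__ == "__main__":
    result = run_workflow(demo_steps(), {
        "vm_name": "massimo-w2k8", "external_port": 999,
        "internal_port": 3389, "email": "massimo@example.com"})
    print(result["output"], result["completed"])
```

Swapping a stub for a real API call only changes what that step returns; the chain itself stays the same.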

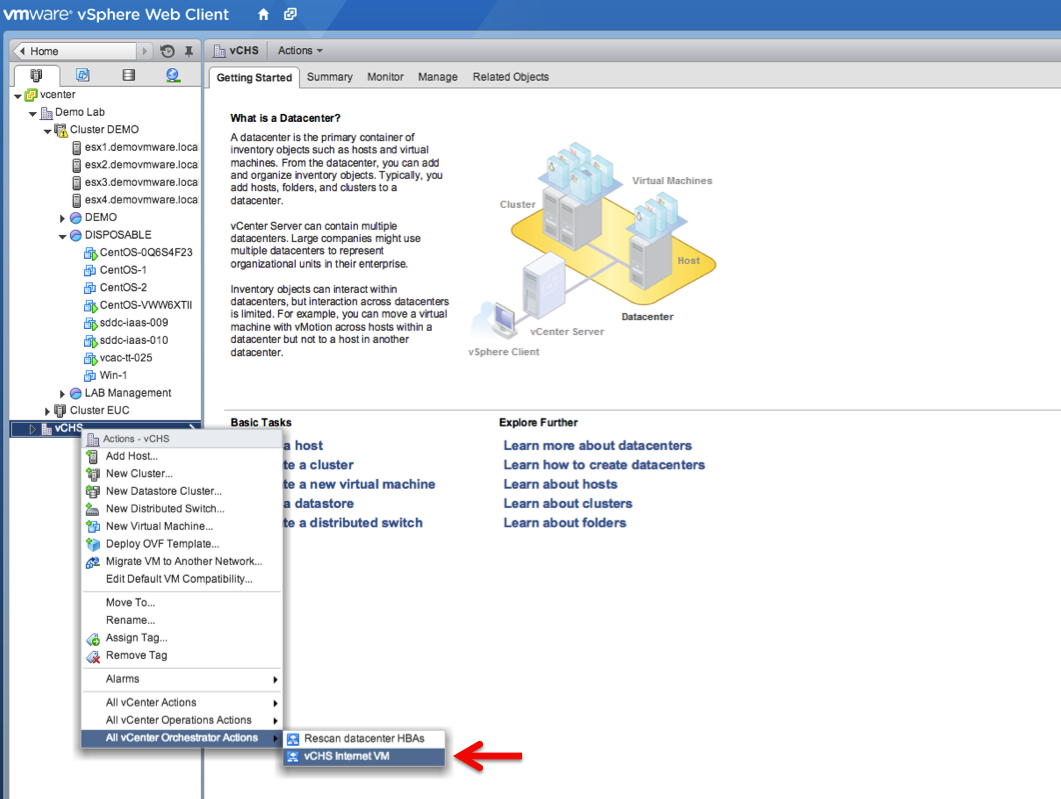

Boris will call this workflow from the vSphere client. Note that Boris has created a ghost Datacenter in the vCenter hierarchy. This is pure "marketing".

The vCO workflow is associated with any Datacenter object (so it would work just as well if you right-clicked on DemoLab in the picture below), but Boris thought it was cool to do it this way. Mind you.

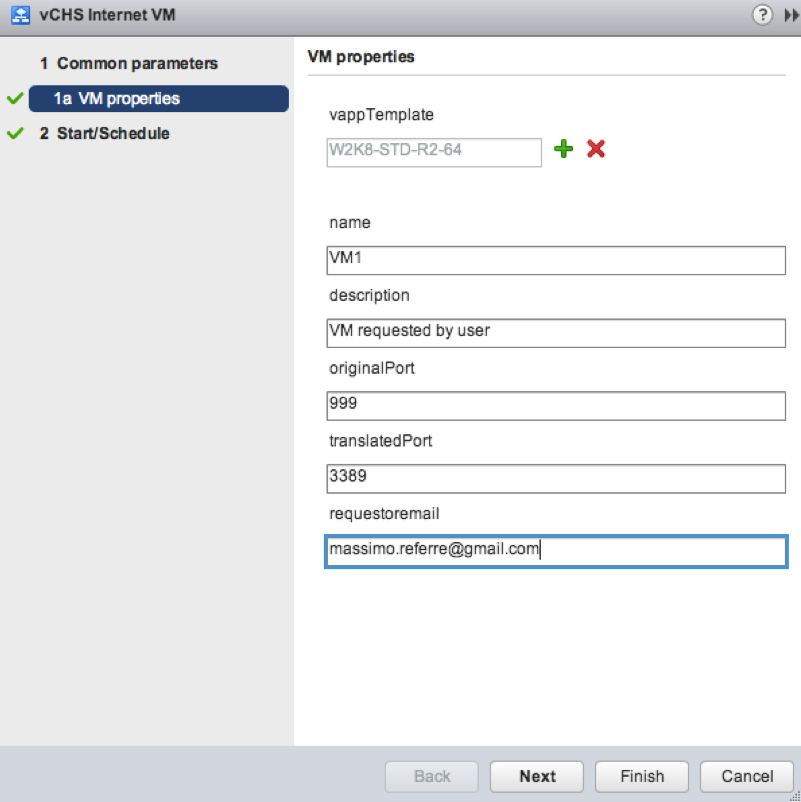

Boris fills in the workflow inputs with the parameters that a user (e.g. Massimo) asked him to use.

In particular, Massimo gave Boris a call begging for a Windows 2008 VM that he could RDP into using port 999. Boris then fills in the input parameters of the workflow as follows:

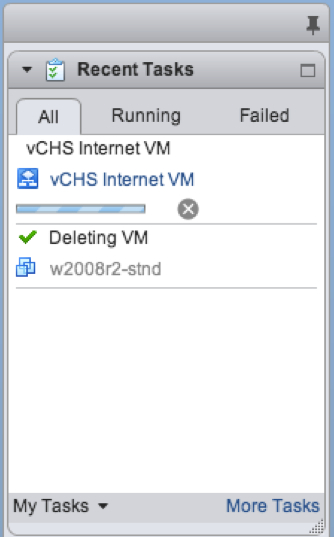
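For Massimo's request, the [Add a NAT rule EDITED] step boils down to a destination NAT (DNAT) rule translating port 999 on the Edge Gateway public IP to port 3389 on the VM (assuming RDP listens on its default port). As a sketch of what that looks like at the vCloud API level, the Python below builds a `NatRule` XML fragment. The element names and order reflect my reading of the vCloud API 5.x `GatewayNatRule` schema and should be checked against the API reference; the uplink href, the IPs and the `dnat_rule_xml` helper are hypothetical. My understanding is that such a fragment goes inside the `NatService` of an `EdgeGatewayServiceConfiguration` sent to the gateway's `action/configureServices` link, and that this call replaces the whole NAT configuration, so existing rules have to be sent along with the new one.

```python
import xml.etree.ElementTree as ET

# vCloud API 1.5/5.x namespace (assumed to apply to edge gateway NAT rules).
VCLOUD_NS = "http://www.vmware.com/vcloud/v1.5"
ET.register_namespace("", VCLOUD_NS)


def _q(tag: str) -> str:
    """Qualify a tag name with the vCloud namespace."""
    return f"{{{VCLOUD_NS}}}{tag}"


def dnat_rule_xml(uplink_href: str, edge_ip: str, external_port: int,
                  vm_ip: str, internal_port: int, protocol: str = "tcp") -> str:
    """Return a DNAT <NatRule> fragment: edge_ip:external_port -> vm_ip:internal_port.

    Element names and order follow my reading of the GatewayNatRule
    schema; verify them against the vCloud API reference before use.
    """
    rule = ET.Element(_q("NatRule"))
    ET.SubElement(rule, _q("RuleType")).text = "DNAT"
    ET.SubElement(rule, _q("IsEnabled")).text = "true"
    gw = ET.SubElement(rule, _q("GatewayNatRule"))
    # The uplink (external) network of the Edge Gateway; hypothetical href.
    ET.SubElement(gw, _q("Interface"), {"href": uplink_href})
    ET.SubElement(gw, _q("OriginalIp")).text = edge_ip
    ET.SubElement(gw, _q("OriginalPort")).text = str(external_port)
    ET.SubElement(gw, _q("TranslatedIp")).text = vm_ip
    ET.SubElement(gw, _q("TranslatedPort")).text = str(internal_port)
    ET.SubElement(gw, _q("Protocol")).text = protocol
    return ET.tostring(rule, encoding="unicode")


if __name__ == "__main__":
    print(dnat_rule_xml("https://vchs.example.com/api/admin/network/UPLINK-ID",
                        "203.0.113.10", 999, "192.168.109.2", 3389))
```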

Boris can see how the workflow is doing because the vSphere web client tracks vCO workflow execution. At this point Boris can forget about Massimo, continue working on his own stuff and go home sooner rather than later.

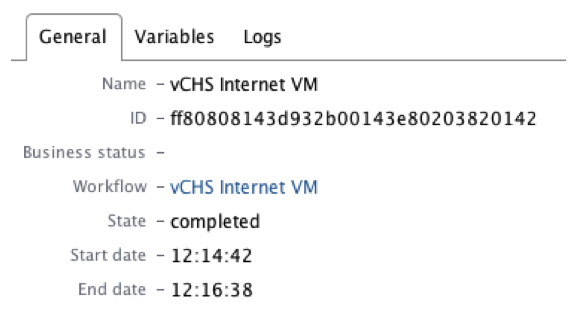

According to the vCO client, the workflow took 116 seconds to run:

The last step of the workflow sends an email to Massimo. Sure enough, Massimo receives the following email:

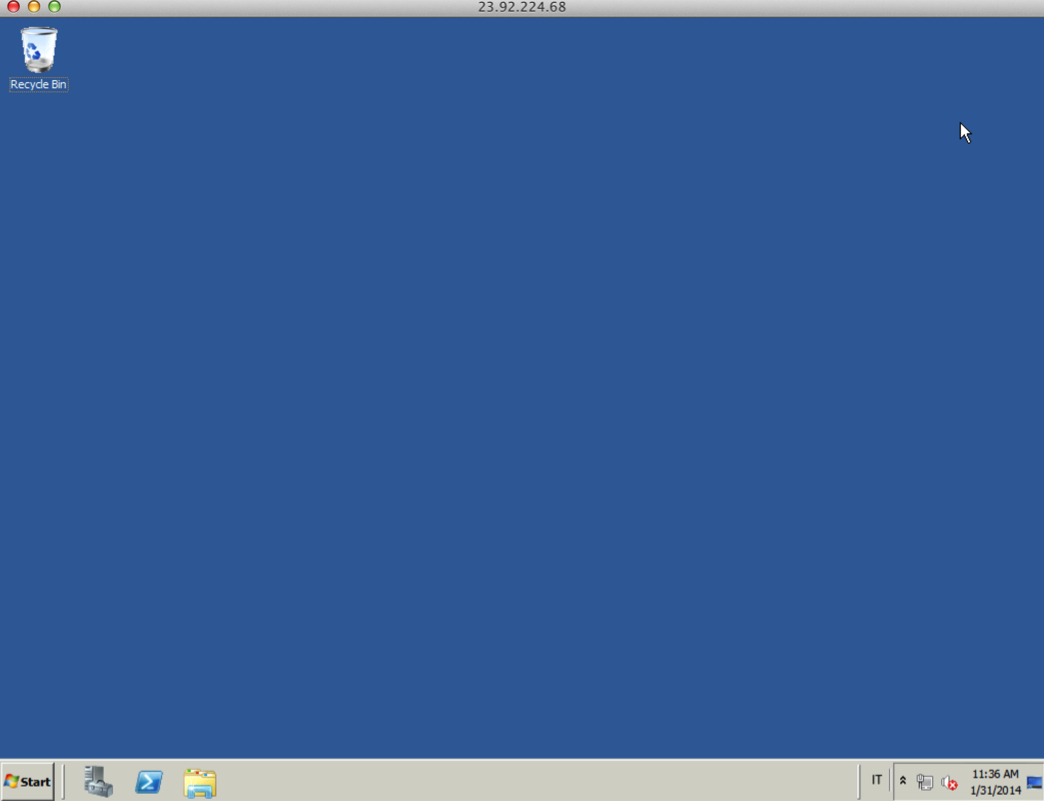

Massimo, with tears in his eyes, then simply opens an RDP client on his Mac and types:

And boom! Boris is his hero now!

The Solution (Live)

Conclusions

Deploying an Internet-reachable VM on demand, from the vSphere client, in 116 seconds.

Assuming we can get rid of all the product version mismatch problems I described at the beginning of the post, building a solution like this takes no longer than 1 or 2 days.

While this is very far from being a true hybrid cloud, I have seen "cloud" projects take 2 years and $2M while delivering half of what you have seen here in 10 minutes.

Say what you want. I think this is amazing.

The workflow I built is pretty basic (no error control or anything). If you want to use it for serious work, you will need to spend more time making it more robust.

You could even reuse more complex (and advanced) vApp deployment workflows, like Christophe's example here, and complement them with other workflow components (FW and NAT rules).

I am fairly sure you can think of at least another couple of hundred use cases for this integration.

Interesting times ahead for vSphere admins. You can all be heroes in your organization.

Massimo.