Cisco UCS: there is something I am still missing

On Monday the 16th Cisco unveiled its Unified Computing System (UCS). A few days ago I was briefed about the product by some local Cisco guys (err, about the architecture, as they stressed). I assume that people reading this post know what Cisco is doing and are familiar with the announcement. In a nutshell, they have announced a new offering that mixes hardware (primarily) and software and comprises the following:

- their Unified Fabric technology (as it can be found in other products like the Nexus family of switches)

- their new Blade technology

- their Management technology (which is an OEM and supposedly customized version of the BMC BladeLogic software)

Consider that there is not a lot of information available at the moment, so most of the discussions are based on preliminary (and poor) documentation. This picture explodes the pieces, and it's one of the few diagrams that Cisco is sharing at this stage:

Never mind that I work for IBM and that many of my colleagues see this as a potential threat to our server hardware business (which I am sure it is). In the final analysis I am a technology geek, and that's how I run this personal blog. What I write here is my own unbiased (believe it or not) personal opinion.

I must admit I am fascinated by what Cisco is trying to achieve here. In theory it sounds like a very compelling solution, and something that anyone should seriously evaluate for virtualization deployments. Having said this, as with all things in life (none excluded) there are pros and cons. I am not going to spend time talking about the pros, as they are obvious and Cisco is certainly going to explain them to you in detail. These include, for example, the potential benefits of the Unified Fabric, which are enormous. I believe end-users reading this blog would be better served, at this point, by someone who starts to highlight the (potential) challenges of designing and implementing such a vision and architecture. This is done to balance the flood of "pros" you will be exposed to. Note this is nothing new on this blog: when VMware announced VMware ESX 3i I wrote an article on the misleading marketing information that was associated with it; similarly, I have done a reality check on VMware Site Recovery Manager to underline its deficiencies rather than magnify its strengths (that's what VMware marketing is paid for).

This is exactly what I'd like to do with this new article: underline the challenges that Cisco is facing. However, I don't want to do that from a competing blade vendor's perspective (that's what the Dell/IBM/HP marketing organizations are for), but rather from a VMware virtualization expert (vExpert) perspective, based on feedback from the field and from various customer projects I have been involved in, past and present.

(Physically) Unified Fabric? No, Divide et Impera!

Cisco is trying to capture a potential convergence in the datacenter. This process started early in the 21st century, when the major server vendors started to ship blade form factors: those blade chassis in fact integrate both Ethernet and Fibre Channel switches as well as compute nodes (i.e. blade servers). This wasn't an easy thing to do in organizations with very strong vertical specializations (and politics!) in the data center. That's why we still see an exaggerated number of "pass-through" technologies being used on blade chassis, which basically externalize every Ethernet and Fibre Channel port of each blade. This diminishes the intrinsic value of blade technology, but it allows the blades to be connected to the legacy infrastructure switches. Most of the time this is done not for technical reasons but merely for political ones: "The server guys are responsible for servers, that's it; the network guys have their own infrastructure and that's (physically) separated from servers....". This is what usually happens in big organizations. I have been through it many times.

Having said this, I support the Cisco message: what these big accounts are doing is very inefficient, and there is room for huge optimization if they could get the internal political issues resolved. However, I think this is one of the problems Cisco is going to face in promoting their Unified Fabric technologies. In reality the situation is exacerbated by the fact that we are talking about a convergence of IP and Storage networks, so even more politics is involved.

Unified Fabric, Weak security?

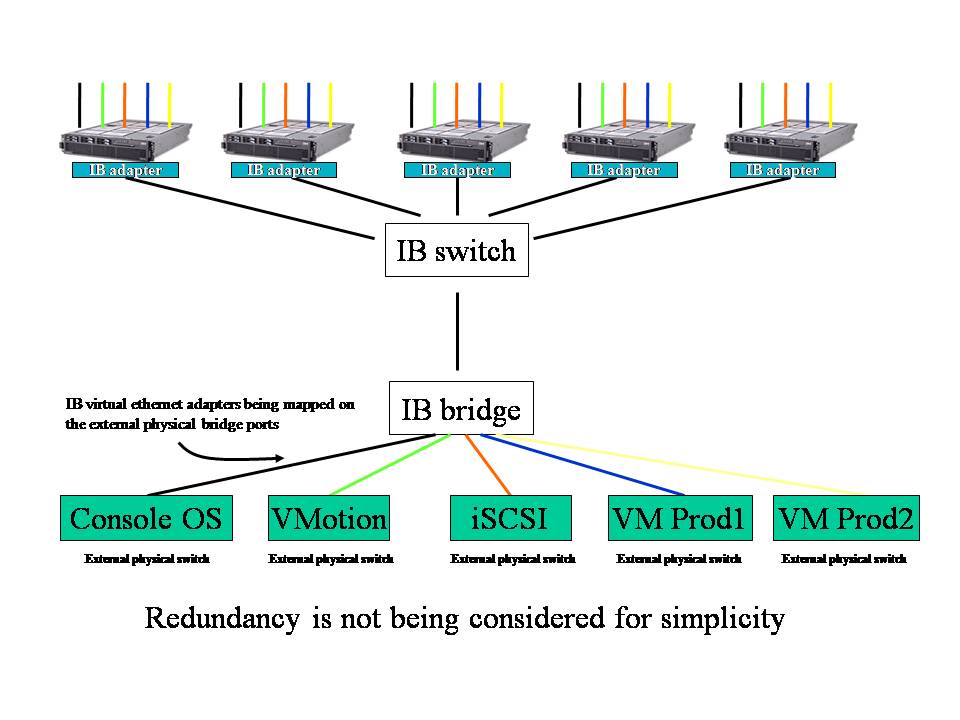

Once we get past the physical consolidation concerns I have discussed above, and customers have accepted positioning the switches in an unconventional location (i.e. closer to the servers than to the infrastructure), Cisco might face another concern related to security. As background, this desire to consolidate and reduce the cabling complexity associated with each VMware ESX server is nothing new. I discussed this very topic back in 2007 in the article "Infiniband Vs 10Gbit Ethernet... with an eye on virtualization". As you can see from the picture in that post (which I am attaching here for your convenience), InfiniBand was supposed to deliver the same concept of I/O virtualization that Cisco is evangelizing with their Unified Fabric:

This is very similar to the latest Cisco Nexus value proposition (and hence to this UCS announcement, as it's based on the Nexus core technology). Whether it's InfiniBand or 10Gbit Unified Fabric, the biggest problem with this layout and architecture (as reported by customers and VMware network security experts in the forum threads linked below) is that each ESX server has a number of network security zones that best practices would require to be kept separate from each other. Many customers achieve this by creating network security zones (i.e. for the ConsoleOS, VMotion, iSCSI, VMs etc.) by means of physically different network adapters that connect to physically separated network switches. For these customers, VLAN and PortGroup technologies are usually not a viable option, as they don't implement and guarantee the level of security and separation these customers need. In the picture above the critical point is that these physically and logically separated network segments need to collapse into a single Bridge/Switch for the whole I/O virtualization to work (be it InfiniBand or Cisco Unified Fabric).

Last but not least, consider that this discussion is multidimensional. Not only is Cisco trying to unify all the different IP segments on the same wire (as already discussed), but they are also trying to unify both IP traffic and Fibre Channel traffic on the same wire (by means of a new technology called FCoE, or Fibre Channel over Ethernet). Obviously this additional dimension adds even more potential security concerns than "simply" collapsing heterogeneous network security zones. There have been a number of interesting discussions on the VMware forum that I highly encourage you to read if you are interested in the matter. You can find them here and here.

This is going to be another challenge for Cisco.

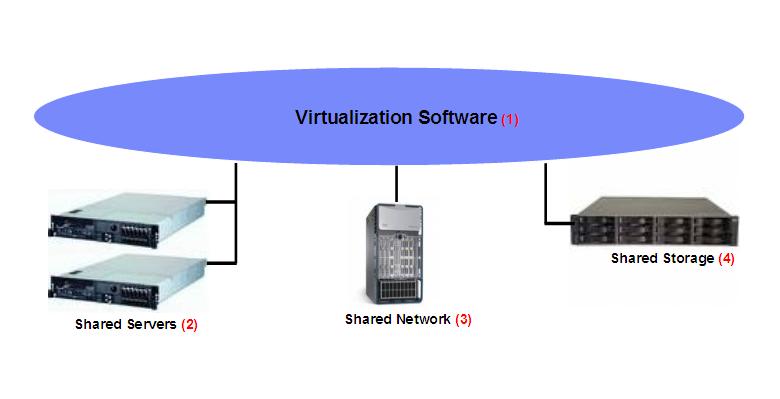

Unified Computing? More like Partially Unified Computing

I don't really get the Cisco message here. I have already talked about how I see the technology trends in this industry; in a nutshell, data centers are being transformed from vertical silos of servers and storage that (statically) support applications into pools of physical resources that can be used when they are needed. You can read more about these trends in this other article I wrote. The picture in the original post doesn't call out one important element of the architecture, which is the network: I didn't call it out because it was obviously there, but let's refine that diagram to draw the complete picture of the elements that make up a virtualized data center.

A properly designed and innovative x86 virtualized data center requires these 4 distinct elements:

- A Shared Server infrastructure

- A Shared Network infrastructure

- A Shared Storage infrastructure

- The Virtualization software (which is the glue that ties together all these components)

Note: in a traditional virtual infrastructure the storage network (be it Fibre Channel or Ethernet) is physically separated from the IP network (which is typically Ethernet). In the context of the Unified Fabric there is a single network (based on 10Gbit technologies) that carries both storage and IP traffic. This doesn't really change the idea of the diagram above; it actually reinforces the message, meaning that the Shared Network is also shared from a "protocol being carried" perspective.

One of the challenges customers have today is that these 4 elements are managed and operated by different vertical (and specific) management tools: you have to use vCenter to manage VMware, the server tools to manage the Shared Server infrastructure, specific tools to manage and operate the Network infrastructure and, ultimately, specific GUIs to manage the shared disk space. This is not, by the way, a negative thing per se, because it allows customers to switch from one vendor to another at any level they want, so they are not locked in. This concept has historically been at the very basis of x86 deployments, and it is one of the most important aspects that determined (and still determines) the success of this platform.

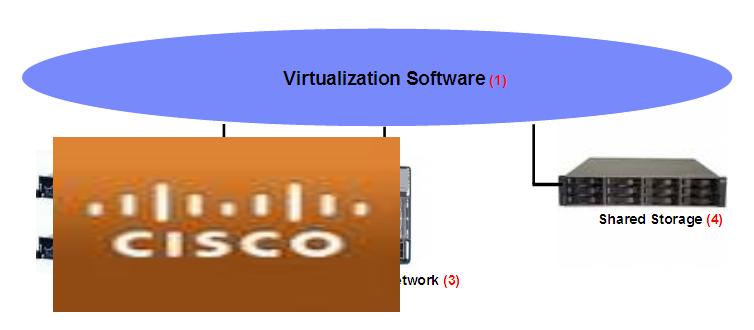

The point I am trying to make is that with their offering Cisco "unifies" only two of these four elements, namely Servers and Network:

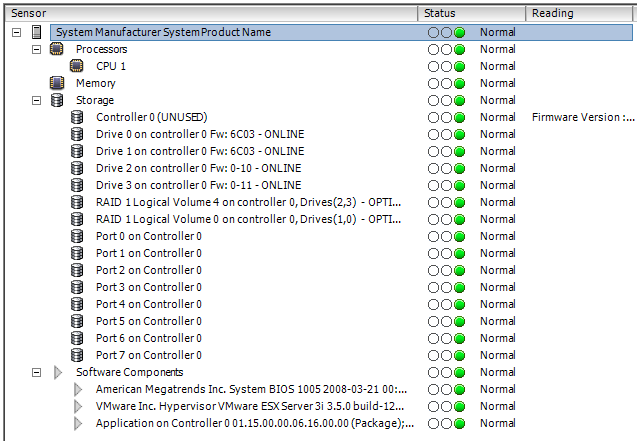

What is this going to mean for customers from a "unification" perspective? Very little, I think. Consider also that the servers themselves, frankly speaking, are probably the most commoditized of the four elements from a management perspective, simply because management standardization (such as IPMI and BMC) allows third parties to build an x86 management layer into their own products. A typical example of this is, funnily enough, the VMware effort to create a CIM-based interface to manage standard x86 servers (this implementation first appeared in ESXi and is now available in the standard ESX version). This is an example of this concept:

I certainly don't want to downplay the challenges associated with managing a server farm but, if you ask me, extending an existing tool with functionality that properly manages an x86 server deployment is not something that should be under scrutiny for a technology Nobel prize, so to speak. Ironically, VMware is "unifying" Virtualization with server management, whereas Cisco is "unifying" Network with server management. Not the holistic unification that is being discussed in the marketing announcements, though.

Similarly to the "unified" management concept above, building a brand new x86 blade is a relatively easy task compared to building a brand new Storage subsystem or a brand new Virtualization software infrastructure element (ask Microsoft). So I am starting to wonder why they have chosen to (partially) "unify" starting from the easiest of the four elements. Here I am assuming that the innovative characteristics of their blades are either easily achievable by the long-standing tier 1 server vendors (Dell, HP, IBM, Sun) or not strictly necessary as of today: the speculated 500+GB of memory support per Cisco blade seems cool, but I question the need for something like this given the current, well-known rules of thumb for sizing ESX hosts. Sure, Nehalem will change these numbers, but even if we double the amount of RAM required for a 2S/8Core system we are still far, far away from the 500+GB in the Cisco specs.
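To put some (purely illustrative) numbers on the memory argument, here is a back-of-the-envelope sketch. The 4 GB-per-core figure is my assumption, picked only to make the arithmetic concrete; it is not a Cisco or VMware number, and the function name is hypothetical. Plug in your own sizing rule and the gap changes, but the shape of the argument does not.

```python
# Hypothetical back-of-the-envelope sizing sketch.
# ASSUMPTION: gb_per_core is an illustrative rule of thumb chosen for this
# example; it is not an official Cisco or VMware sizing guideline.

def host_ram_gb(sockets: int, cores_per_socket: int, gb_per_core: float,
                growth_factor: float = 1.0) -> float:
    """Estimate ESX host RAM as cores x GB-per-core x a growth factor."""
    return sockets * cores_per_socket * gb_per_core * growth_factor

CISCO_BLADE_RAM_GB = 500  # the speculated 500+GB per blade quoted above

# A 2-socket, quad-core (2S/8Core) host at an assumed 4 GB per core...
today = host_ram_gb(2, 4, gb_per_core=4)
# ...then doubled to allow for denser, Nehalem-era consolidation.
nehalem = host_ram_gb(2, 4, gb_per_core=4, growth_factor=2)

print(f"today: {today:.0f} GB, doubled: {nehalem:.0f} GB")
# prints "today: 32 GB, doubled: 64 GB"
print(f"500 GB is {CISCO_BLADE_RAM_GB / nehalem:.1f}x the doubled estimate")
# prints "500 GB is 7.8x the doubled estimate"
```

Even with the doubling, the assumed host lands well short of the 500+GB figure, which is the gap I am pointing at.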

Moreover, Cisco has clearly stated that they want to leave the Software Virtualization and the Shared Storage elements open. I don't want to provide more details here, as I am not sure about the level of confidentiality associated with the information I have, but the key point is that their strategy calls for neither a single Virtualization vendor nor a single Storage vendor. Enough for now. And this again leads me to wonder what sort of "unification" this is all about. What I have learned, basically, is that you can buy UCS and use, now or in the future, your storage vendor of choice (with the management framework that comes with it) as well as your virtualization platform of choice (again with the management framework that comes with it). You will do this with all the benefits and challenges that end-users experience today in aggregating and integrating different vendors to create the ultimate virtualized infrastructure.

Don't get me wrong. I am a fan of this Unified Fabric concept and I hope it will take off, as it will solve many of the enterprise customers' challenges associated with managing distributed infrastructure. As I said, there is lots of information available on the web on the benefits of implementing this highly consolidated and "intelligent" fabric. This post from Chad Sakac (of EMC), for example, discusses some of these benefits.

What I am questioning is this Cisco move to extend their value proposition from the Unified Fabric into a market (x86 blades) that doesn't really add any benefit to their unification story. Reading through Chad's excellent post, I can't really see what is unique about doing what he describes compared with using alternative components, such as Dell / HP / IBM / Sun servers and Dell / EMC / HP / IBM / NetApp / Sun storage, all tied together with the Cisco Nexus technology, which remains the real Cisco value add in this context. That's what I am missing.

That's the question I asked during the session a few days ago: what's in it for customers if they use a Cisco UCS infrastructure compared to an IBM BladeCenter + Cisco Nexus infrastructure? Granted, Nexus switches for the IBM BladeCenter do not exist today, so this is a hypothetical question. Sure, they have this "integrated management" framework, but what's the value in it if all it does is manage a subset of the entire infrastructure? Customers will still be forced to deal with a number of vertical management pieces to operate the infrastructure end-to-end.

I am missing it, unless there is some sort of grand plan behind the scenes to make the EMC and Cisco pair "more tied" (whatever that means). How about an "EMCisco"? I am going to copyright this term: a brief search on the Internet didn't find any result for it used in the IT context (although apparently there is a DJ called EMCisco). This single IT entity would, in fact, be able to provide an end-to-end infrastructure made up of virtualization software, network, servers and storage, and it would be able to really integrate the whole thing into a single management and operational framework, with potentially much deeper integration (beyond the standard public APIs that interconnect the four different elements). The interesting part is that, as I said, the x86 server market (and its surroundings) is literally modular, and no customer that I know would be willing to be locked in this way (unless there are compelling reasons to do so, which I am not ruling out).

The bottom line is that, if I were malicious, I would be led to think that today Cisco is more interested in getting a slice of the 30B+ US$ x86 server market (on top of what they can do with their Unified Fabric solutions) through the development and integration of the most commoditized of the four elements. I can easily see what's in it for Cisco: easy additional money. I can't really see, so far, what's in it for customers.

I'll let Cisco give you the bright side of their new UCS platform. My role here was to show you the dark side of it (someone has to).

Massimo.