Hyper-V Server R2 on BladeCenter S Tutorial

My good friend at Microsoft, Giorgio Malusardi, noticed my post "Enterprise Virtualization in a Box", which was essentially an example of how to create a BladeCenter-contained, VMware-enabled data center in a box (including servers, storage and networking). Giorgio challenged me to create something similar using the Hyper-V Server R2 Beta that has just been announced. And I accepted the challenge!

This tutorial documents the setup of the environment based on what I have seen and done. I will share my point of view on what's going on, and the implications this will or might have for the x86 market, in another piece.

Microsoft Virtualization Background

For those of you who are missing the Microsoft basics, it helps to set the stage. Right now, Microsoft is shipping the first version of its hypervisor - Hyper-V - through two different channels. The first one is as a component (or role) of the Microsoft Windows Server 2008 products. You can enable or disable this role in either a normal (GUI-based) Windows Server 2008 install or a core (GUI-less) Windows Server 2008 install. Obviously, in order to get Hyper-V, you need to buy a Windows Server 2008 SKU (Hyper-V is included in any 64-bit x86 version of the Standard, Enterprise and Datacenter SKUs). The license rights for guests and included features - such as Failover Clustering technology - are determined by which SKU is purchased.

The second channel is a free download from the Microsoft web site, in a package called Microsoft Hyper-V Server 2008. In a nutshell, this is a scaled-down version of Windows Server 2008 with the following restrictions and peculiarities:

- It is a core install only (i.e. GUI-less as the only option)

- The only role that it supports - which is enabled by default - is Hyper-V (for example, you can't enable the Failover Clustering role)

- It doesn't include any license for Windows guest OSes

- It does have a number of artificial limitations in terms of number of CPUs and amount of system memory supported.

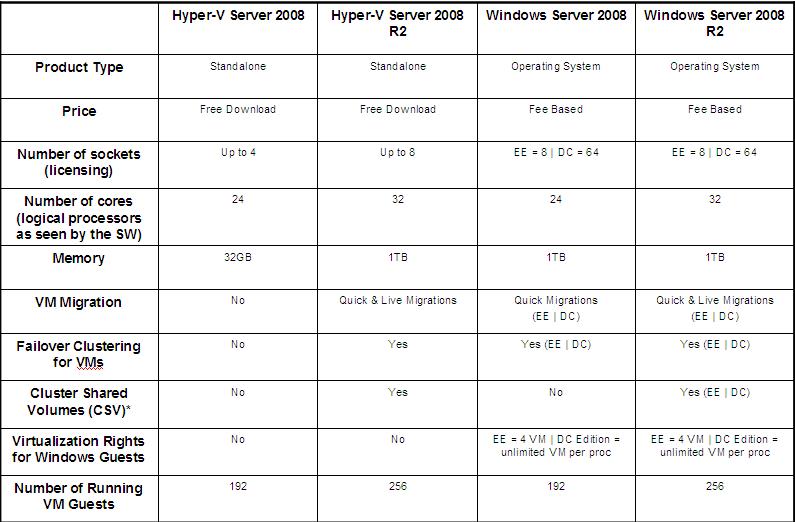

That's what's available as of today. However, Microsoft recently announced the availability of the Beta version of Windows Server 2008 R2 and Hyper-V Server 2008 R2. Both products will include the second generation of the Hyper-V hypervisor and are currently scheduled to ship roughly a year from now. With this Beta, Microsoft announced new features and new restrictions for the free package. The following table is a summary of the features in the current and future offerings:

* Cluster Shared Volumes is a technology currently in Beta that will ship along with the second generation of Hyper-V. It allows the NTFS file system to be used as if it were a "cluster file system" (à la VMFS, so to speak). See later in this document for more information on the CSV technology.

Those of you familiar with the Microsoft Virtualization technology will notice that the Windows Server 2008 R2 SKUs will have similar restrictions and limitations compared to the current releases. This statement obviously doesn't take into account new features introduced with the second generation of the hypervisor (such as Live Migration, for example). As you may have noticed, the biggest delta, both in terms of new features and artificial limitations, is between the currently shipping Hyper-V Server 2008 (first column from the left) and the future Hyper-V Server 2008 R2 (second column from the left). Among many differences, it is worth noting that the new (free!) product will support:

- 8 sockets (vs. current artificially limited 4)

- 1TB of memory (vs. current artificially limited 32GB)

- Quick and Live Migration (vs. nothing)

- Failover Clustering (vs. nothing)

- Cluster Shared Volumes (vs. nothing)
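To make the size of that delta concrete, here is a minimal Python sketch that compares the two free products using only the figures quoted in the list above. The dictionary layout and key names are my own illustrative assumptions; they are not a Microsoft data format.

```python
# Limits and features as quoted in the list above. The structure and key
# names are illustrative assumptions, not anything defined by Microsoft.
HYPERV_SERVER_2008 = {
    "sockets": 4,
    "memory_gb": 32,
    "features": set(),
}

HYPERV_SERVER_2008_R2 = {
    "sockets": 8,
    "memory_gb": 1024,  # 1TB expressed in GB
    "features": {
        "Quick Migration",
        "Live Migration",
        "Failover Clustering",
        "Cluster Shared Volumes",
    },
}


def summarize_delta(old, new):
    """Return the growth factors and the features present only in `new`."""
    return {
        "socket_factor": new["sockets"] / old["sockets"],
        "memory_factor": new["memory_gb"] / old["memory_gb"],
        "added_features": sorted(new["features"] - old["features"]),
    }


delta = summarize_delta(HYPERV_SERVER_2008, HYPERV_SERVER_2008_R2)
print(delta)
```

The point of the exercise is simply that the memory ceiling grows far more than the socket count does, and that every clustering-related feature is new to the free package.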

The Hyper-V Server R2 Based Self-Contained Data Center

Back on track. As I said, the challenge was to replicate the VMware-based setup we have done on the BladeCenter S. We used the very same hardware setup as for the VMware test. While we wanted to test the Hyper-V Server R2 Beta, it should be noted that the currently shipping Hyper-V solution works on the BladeCenter S today as well. This is a (generic) picture of the BladeCenter S chassis:

For this proof of concept, I decided to look at things from the following perspective:

- I wanted to focus on the Hyper-V Server R2 free product (and not on the general purpose Windows Server 2008 R2 w/ Hyper-V role enabled)

- I wanted to focus on new technologies that will be shipping in the R2 timeframe. This includes CSV, Failover Clustering and Live Migration

- I wanted to focus on what you could do with the future Microsoft free offering. This includes the standard free tools to manage the environment and obviously doesn't include the fee-based products such as Virtual Machine Manager (the current version wouldn't support Hyper-V Server R2 anyway and there is not a "sister Beta version" of VMM to test with the Hyper-V R2 Beta bits).

All this being said we can "replay" what I have done.

Hyper-V Server R2 Nodes Setup

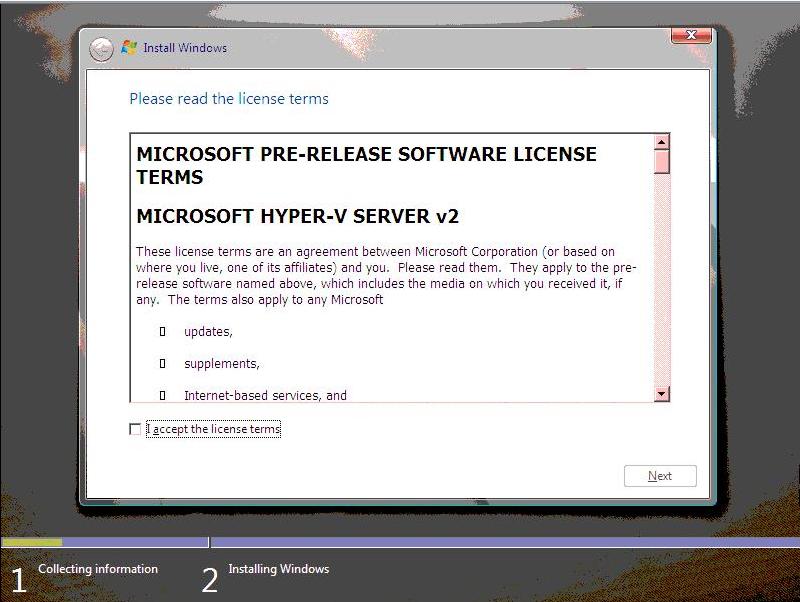

First, I started installing Windows Hyper-V Server R2 on the two local disks of the two blades in the chassis. This is a picture taken from the Management Module of the BladeCenter S during the setup (remote attended install):

I could have set up the base OS on the shared storage as well, by dedicating a small LUN to each of the two blades, but I remember that in the Windows 2003 timeframe a registry tweak was needed to allow a single shared SAS/FC adapter to handle both the C:\ drive and the shared storage in an MSCS scenario. I didn't want to get into that level of complexity, especially as it was not one of the main goals I had with this proof of concept. Suffice it to say that I am sure you could get rid of the local disks if you really wanted to.

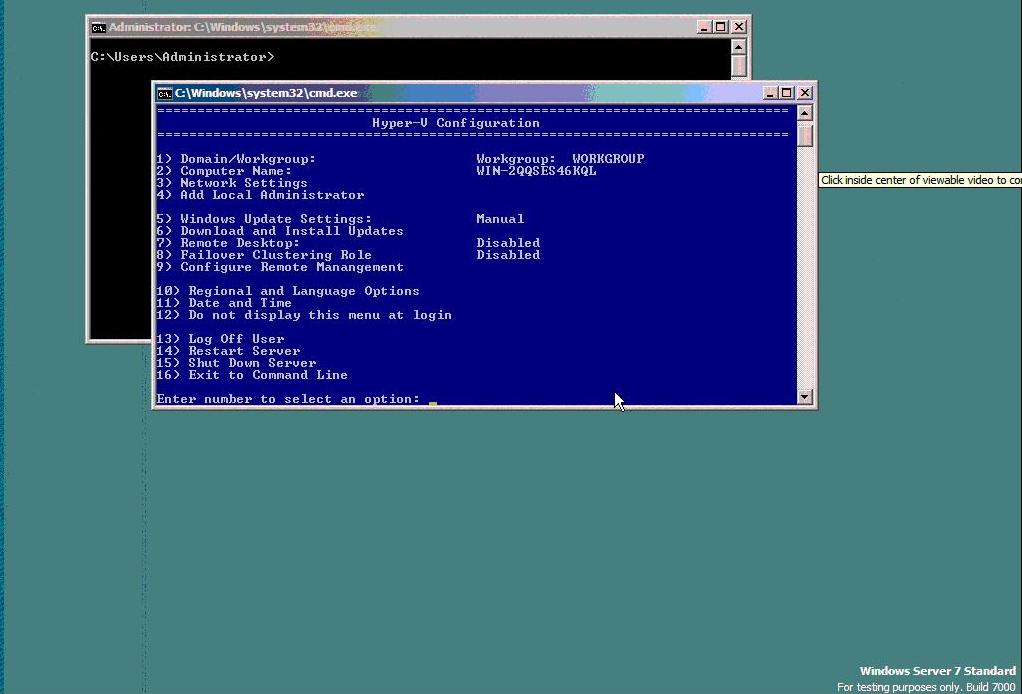

The setup doesn't really ask too many things. Actually nothing. At the next reboot you are asked to change the Administrator password and off you go. This is what you get on a Hyper-V Server R2 Beta local console:

Through the Hyper-V Configuration panel (blue window), I did the following:

- Changed the default Host Name (into HVR2NODO1 and HVR2NODO2)

- Restarted server to apply the computer name settings

- Changed the IP to static addresses (192.168.88.131/132)

- Enabled RDP support

- Configured Remote Management to allow WinRM and relax Firewall settings

- Enabled an extra firewall setting (through the command netsh advfirewall firewall set rule group="Remote Volume Management" new enable=yes) for managing the disks through a remote MMC snap-in

- Joined the domain (Windows 2008 R2 Domain created on a separate server on the network)

- Added the domain Administrator to the local Administrators group (option 4 of the Hyper-V Configuration tool).

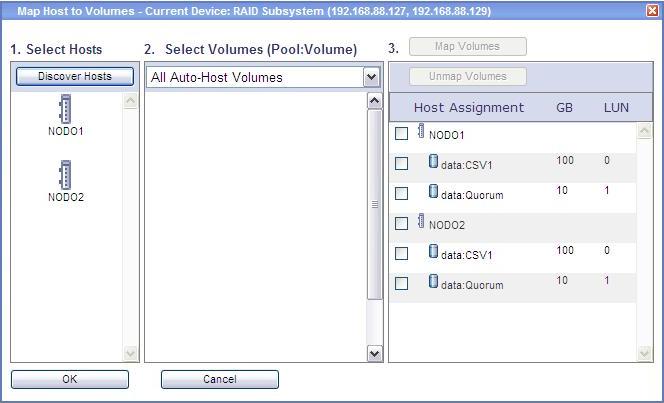

At this point - before enabling Failover Clustering support - I configured both blades to access two shared LUNs created with the IBM Storage Configuration Manager, which is the tool you can use to configure the BladeCenter S integrated storage. This picture shows that a Quorum LUN (10GB) and a CSV LUN (100GB) have been assigned to both blades in the chassis.

A restart of both blades allowed the domain change to take effect as well as the disks to be recognized by the two Hyper-V Server R2 instances (alternatively, a disk rescan would do this job).

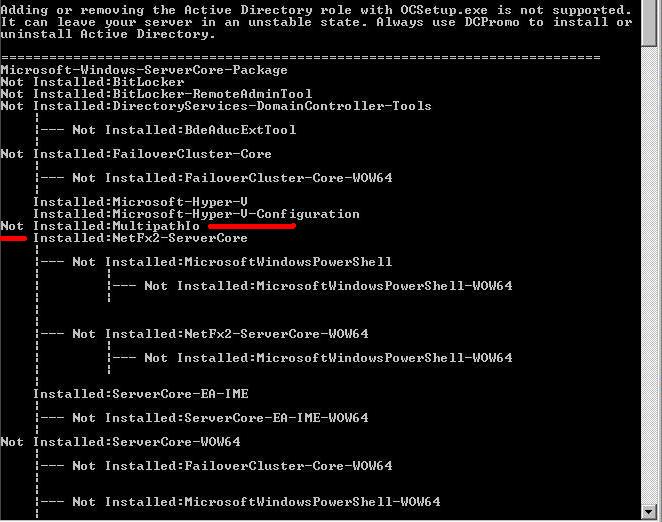

Because of the fully redundant fabric architecture of the BladeCenter S, each blade has a dual path to the disks, so the two disks we have just configured (Quorum and CSV1) are seen twice by the hypervisor OS (this is, by the way, the big plus of this chassis with the integrated storage). Multipath I/O software needs to be installed on the Hyper-V hosts to manage the disks properly. The first step is to enable the base Microsoft MPIO support, which is not installed by default. The command "oclist" displays all the features that are enabled or disabled on the host, as you can see from the picture below:

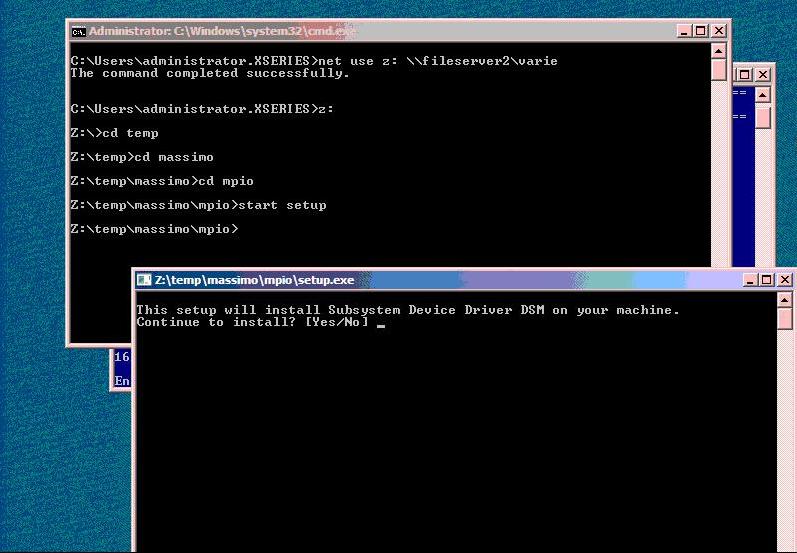

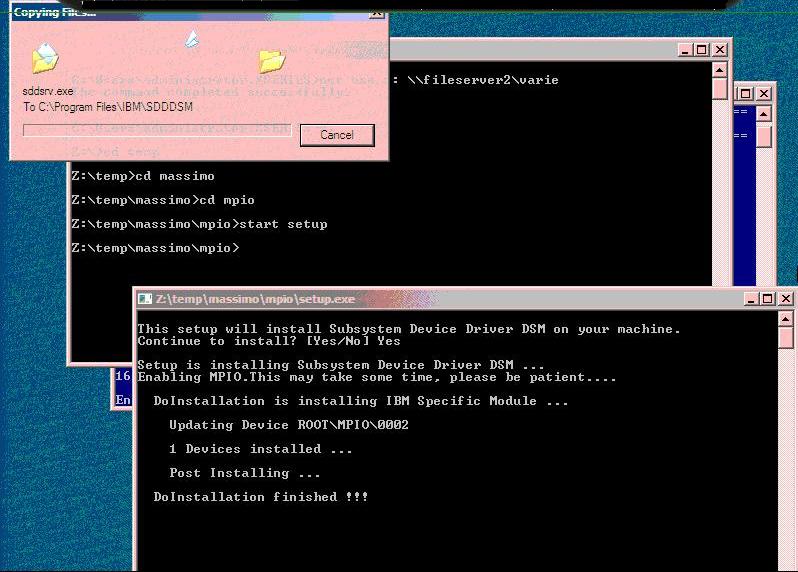

On one of the two hosts, I manually enabled base Microsoft MPIO support (via the command "start /w ocsetup MultipathIo"), but this is not enough: I also had to install the storage-specific multipath software that interacts with the base Microsoft MPIO code. In IBM terms this is called the IBM Subsystem Device Driver, and it can be downloaded from the external website. At the time of this writing, the package is located at this link and it's called the "SDDDSM Package for RSSM" (SDDDSM = Subsystem Device Driver Device Specific Module; RSSM = RAID SAS Switch Module). It's interesting to note that the package in question has a typical Windows setup, so I was wondering how it could be installed on a GUI-less system. Well, launching setup.exe did the job, as you can see in the following pictures.

First impression was that this was not really a GUI-less system, but rather a standard Windows system where explorer.exe was disabled. Well, never mind....

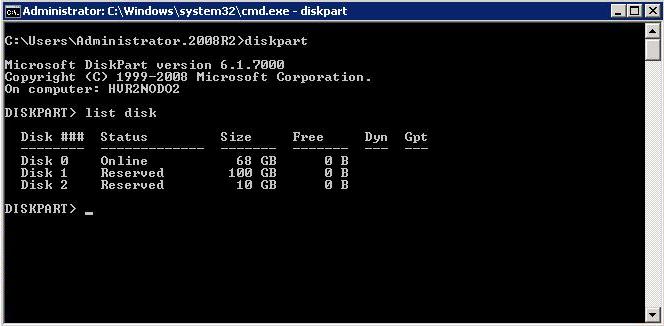

After the reboot the system was up and running again, and the hypervisor correctly reported only two disks being assigned to the blade (the 68GB disk is the local hard drive whereas the 100GB and the 10GB are the two LUNs I created with the Storage Configuration Manager utility).
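Conceptually, what the multipath software does here is collapse several paths to the same LUN into one disk. The Python sketch below is only a toy model of that idea, not a description of how Microsoft MPIO or the IBM SDDDSM is implemented. The path labels ("path-A", "path-B") and the disk identifiers are made up for illustration; only the disk sizes come from the setup described above.

```python
from collections import defaultdict

# Hypothetical view of what one blade sees before multipath software is
# active: every shared LUN shows up once per path. Path labels are invented.
raw_disks = [
    ("local", "local-68GB"),
    ("path-A", "Quorum-10GB"),
    ("path-B", "Quorum-10GB"),
    ("path-A", "CSV1-100GB"),
    ("path-B", "CSV1-100GB"),
]


def group_paths_by_lun(disks):
    """Group (path, lun) pairs so that each LUN is listed once with all its paths."""
    paths = defaultdict(list)
    for path, lun in disks:
        paths[lun].append(path)
    return dict(paths)


multipath_view = group_paths_by_lun(raw_disks)

print(f"disk entries without multipath: {len(raw_disks)}")
print(f"disks with multipath:           {len(multipath_view)}")
for lun, paths in multipath_view.items():
    print(f"  {lun}: reachable via {', '.join(paths)}")
```

In this model the five raw entries reduce to three disks: the local drive plus the two shared LUNs, each reachable over two paths.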

On the second blade we found out right away that installing the IBM SDD software automatically enabled the Windows base MPIO support (if it doesn't, just use the command above to enable it).

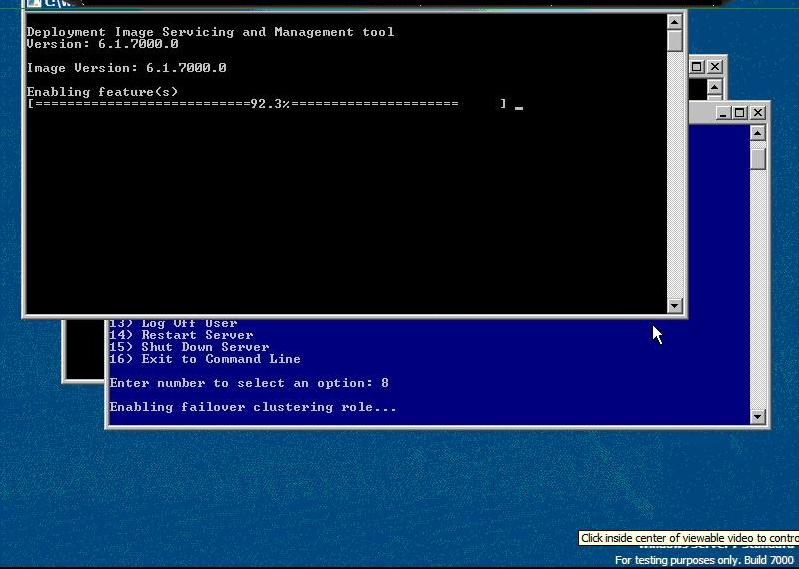

At this point we enabled the Failover Clustering feature on both hosts via option #8 of the Hyper-V Configuration window. This enables the Microsoft Cluster Server code on the two hosts. The picture below shows what happens on the console when you enable this feature. The Cluster itself will be configured later.

This is pretty much it for the Hyper-V hosts setup, and it concludes the base configuration that needs to be done on the Hyper-V Server Configuration console. From now on we can do pretty much everything from the Microsoft remote tools.

Hyper-V Server R2 Nodes Configuration from a Remote Workstation

We can now switch focus to a Windows 2008 R2 Server that we previously installed and configured to be a Domain Controller for our test bed. Remote administration of the Hyper-V hosts can be done either from this host (after enabling some remote administrative tools that are disabled by default) or from a Vista / Windows 7 workstation using the latest RSAT tools available from the Microsoft web site. These tools include advanced Remote Administration MMC Snap-Ins that don't ship with the base client OS and allow you to perform enhanced tasks such as Live Migration. The latest release of these tools (in beta) can be downloaded here.

If you use the workstation, it must be in the same domain you joined the Hyper-V R2 hosts to. If it is not in the same domain, extra configuration steps on the Hyper-V servers are required to relax cross-domain security restrictions. Since one of the purposes of this test was to demonstrate how you can remotely manage advanced hypervisor features using free tools, we have created an MMC configuration (that we called "MasterMMC") which includes the following Snap-Ins:

- Remote Disk Management

- Failover Cluster Manager

- Hyper-V Manager.

I have used the Remote Disk Management tool to configure partitions and file systems on the two shared disks on both blades: I have assigned the Quorum LUN the Q: letter and the CSV1 LUN the X: letter on both nodes to prepare for cluster enablement. Initially I had a hard time getting to the Hyper-V nodes via this applet. I eventually managed to get to a stable state where I could manage the disks, but I have had many connection issues ("RPC Server unavailable") that I couldn't nail down to a particular problem. Potential causes include firewall issues as well as bugs in the code (the pane didn't refresh properly, so I had to close and re-open the MasterMMC).

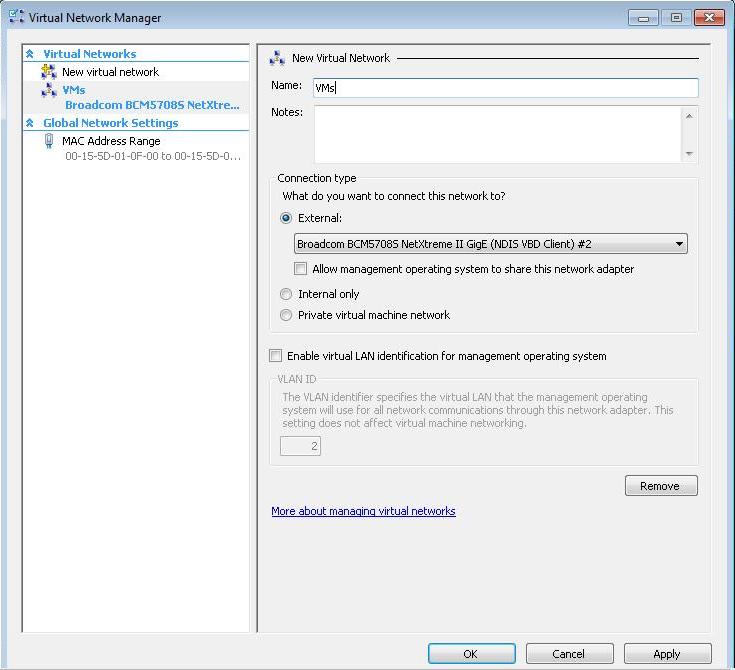

The Hyper-V Manager Snap-In was more straightforward. The only thing I have done here is assign the second Gigabit adapter on the blade to a VirtualSwitch (called VMs in the screenshot below) that I have defined on both Hyper-V nodes. The first NIC (which I configured with a static IP address at the beginning of the setup) remains dedicated to the parent partition.

This is the "network" (aka VirtualSwitch) to which you will connect the guests to get physical network access.

Notice that the BladeCenter S supports blades with up to 4 NICs configured. For this test only two NICs have been configured on each blade. Remember, Hyper-V currently does not allow NIC teaming at the hypervisor level (i.e. assigning more than one NIC to the same VirtualSwitch). Microsoft advises using third-party NIC software to create bonds of network adapters and assigning the resulting "bonded NIC" to the VirtualSwitch. It's not clear whether Hyper-V R2 is going to change this when the gold code ships.

The next step is to configure the cluster across the nodes. This is not really Hyper-V Server specific, as the procedure is pretty similar to what you would do on a Windows 2008 Enterprise Server. It involves validating the hardware setup first with the built-in utility and then configuring the cluster properties (clustername, IP address etc).

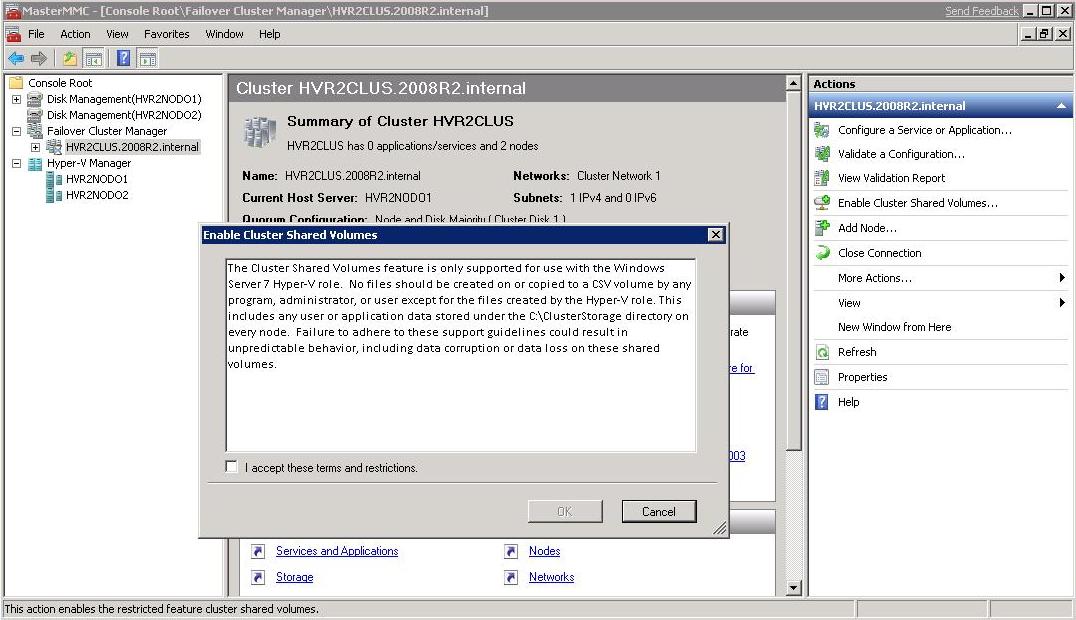

Next, I enabled Cluster Shared Volumes. Those of you who are familiar with Hyper-V and Failover Clustering know that, in order to manage a guest as a single entity (i.e. to "Quick Migrate" a VM from one host to another independently of the others), the VM needs to be created on a dedicated shared LUN. This is, by the way, the configuration Microsoft usually advises. It has a number of implications: you could easily run out of drive letters in the cluster (this can, however, be bypassed using specific mounting techniques), but more importantly it introduces management overhead, because you need to create a LUN for each VM you deploy rather than leveraging one big cluster-wide shared LUN (as VMware VMFS allows you to do). That is what CSVs are all about: they provide a "cluster file system"-like environment where you can run a number of different guests on different hosts pointing to the same shared LUN. In fact, it's not by chance that I have assigned the blades a 10GB disk to be used as a dedicated Quorum, as well as a single 100GB CSV1 LUN to be used concurrently as a shared repository to host multiple VMs. This is obviously a new and big benefit, since in the current Microsoft Cluster Server architecture, if a node owns and can access a LUN, the other host in the cluster is prevented from accessing it (at least until the group containing the LUN fails and the cluster changes its ownership).
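The management overhead is easy to put into numbers. The following Python sketch is a back-of-the-envelope model only: it assumes one drive letter per dedicated LUN (i.e. no mount-point tricks), that a CSV is reached through a folder path rather than a drive letter, and that the letters A, B, C and Q are already taken. All of these are simplifying assumptions of mine, and the function name is hypothetical.

```python
import string

# Assumption: A, B and C are taken by the system and Q by the Quorum disk.
RESERVED_LETTERS = {"A", "B", "C", "Q"}
AVAILABLE_LETTERS = [c for c in string.ascii_uppercase if c not in RESERVED_LETTERS]


def plan_storage(vm_count, use_csv):
    """Estimate LUNs and drive letters needed for `vm_count` clustered VMs.

    Without CSV, every VM gets its own shared LUN and drive letter.
    With CSV, all VMs share one LUN that needs no drive letter.
    """
    luns = 1 if use_csv else vm_count
    letters_needed = 0 if use_csv else vm_count
    return {
        "luns": luns,
        "letters_needed": letters_needed,
        "fits_in_drive_letters": letters_needed <= len(AVAILABLE_LETTERS),
    }


for vms in (5, 30):
    print(vms, "VMs, dedicated LUNs:", plan_storage(vms, use_csv=False))
    print(vms, "VMs, single CSV:    ", plan_storage(vms, use_csv=True))
```

With these assumptions, 30 VMs on dedicated LUNs means 30 LUNs to provision and more drive letters than the cluster has left, while the CSV approach stays at a single LUN regardless of the VM count.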

The picture below shows the disclaimer about CSV: they can only be used to host virtual machines in a Hyper-V R2 environment! This means they can't be used in a general purpose Windows Server 2008 Microsoft Failover Clustering scenario.

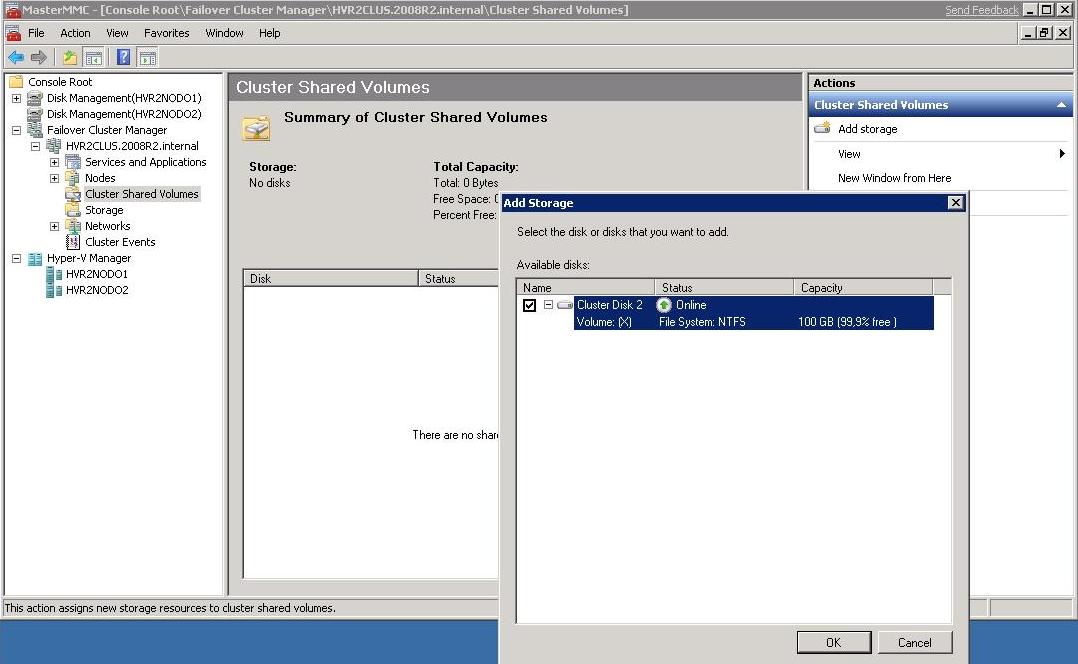

The cluster configuration wizard asks me which volumes I want to enable: CSV1 is the only remaining partition I have (the Q: drive has already been used for the Quorum):

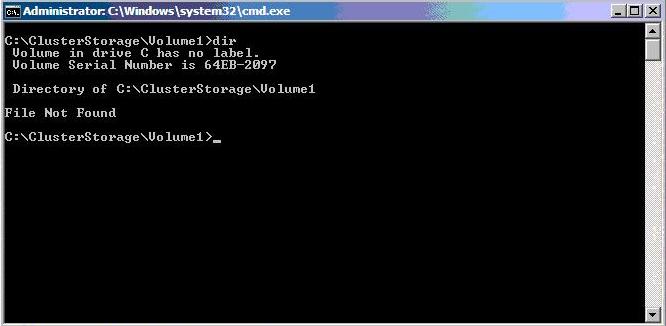

Once the CSV has been enabled, on each cluster node a new directory structure appears. The default is "C:\ClusterStorage\Volume1"

This is a "virtual pointer" that refers to the CSV1 LUN and it shows up on each of the two blades and describes a sort of common/shared name space that both blades can access at the same time. This concept applies to virtual machines only and the usage of CSV cannot be extended to a general purpose cluster file system at the moment.
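Because the CSV is exposed as an ordinary-looking folder, any script that decides where to put VM files only needs to work with that path. Here is a minimal Python sketch of that idea using the standard pathlib module. The helper names and the "TestVM" name are hypothetical; only the root path is taken from the setup above.

```python
from pathlib import PureWindowsPath

# Default CSV mount point as it appeared on both blades in this setup.
CSV_ROOT = PureWindowsPath(r"C:\ClusterStorage\Volume1")


def vm_folder(vm_name):
    """Build the folder a VM would use under the shared CSV name space."""
    return CSV_ROOT / vm_name


def is_on_csv(path):
    """Return True if `path` is the CSV root or lives somewhere below it."""
    candidate = PureWindowsPath(path)
    return candidate == CSV_ROOT or CSV_ROOT in candidate.parents


print(vm_folder("TestVM"))
print(is_on_csv(r"C:\ClusterStorage\Volume1\TestVM\disk.vhd"))
print(is_on_csv(r"C:\ProgramData\Microsoft\Windows\Hyper-V"))
```

PureWindowsPath is used so that the sketch handles Windows path rules (backslashes, case-insensitive comparison) even when it is run on a non-Windows workstation. It only manipulates path strings and never touches the file system.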

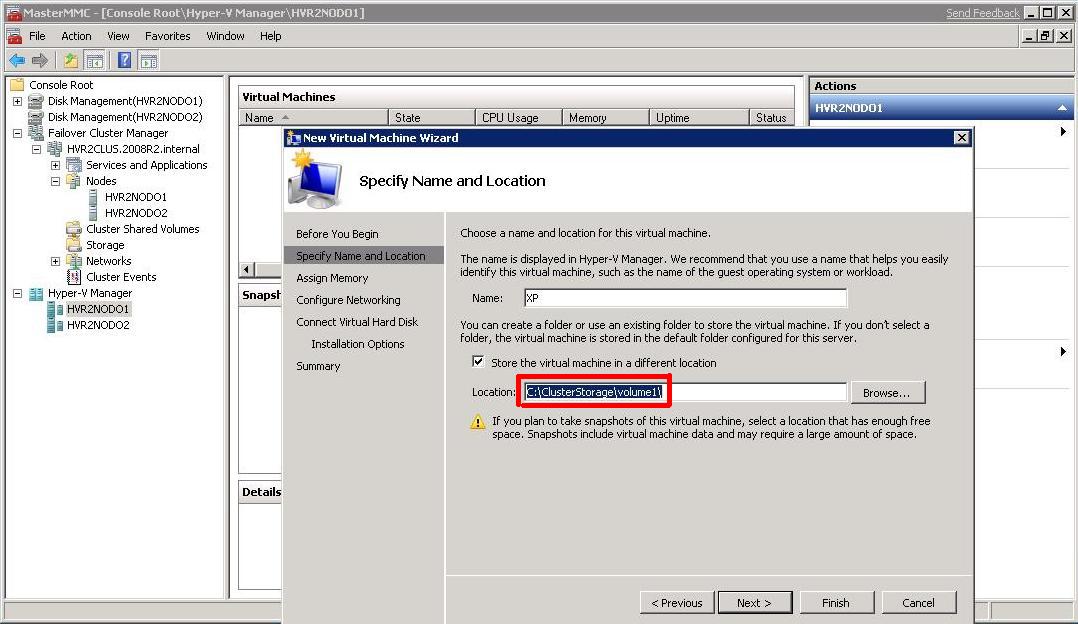

Now that we have a cluster set up and a CSV available, we are going to create a virtual machine. We point to the Hyper-V Manager Snap-In in the MasterMMC window and configure the VM to be hosted on the CSV, explicitly choosing the common local name space that identifies the CSV on the Storage Area Network:

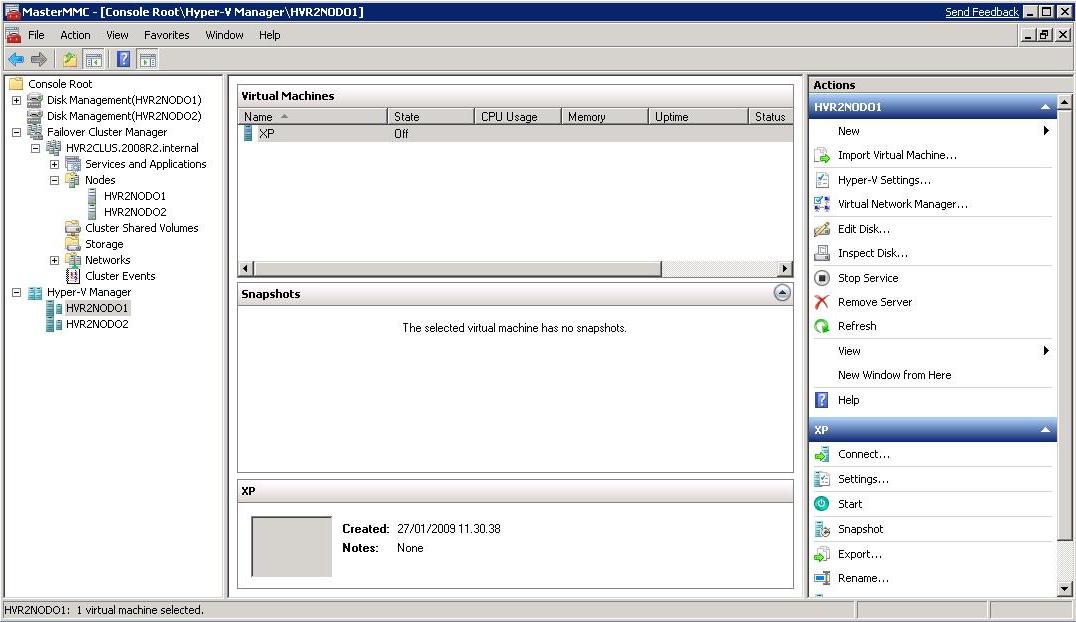

At first it seems odd to create a to-be-clustered virtual machine on a "C:" drive, but that's the way it works. Obviously the VM files won't be created on the local drive of the blade because, as I said, that path represents a location that is actually on the SAN. This is what our MasterMMC looks like once we have done all this:

So far we have only created the VM. It's not yet clustered, as there is no integration between the Hyper-V hosts and the Failover Clustering applet, using the free management tools. Microsoft Virtual Machine Manager is supposed to provide this integrated view and operations, but as I said at the beginning, the currently shipping VMM version doesn't manage Hyper-V R2 Beta hosts yet. Besides, it would be beyond the scope of this document anyway. So in order to clusterize the VM we have to explicitly and manually declare this VM as a clustered resource. The steps are similar to how you would configure any cluster resource; just make sure you select "Virtual Machine" as a resource type and then you are presented with a list of VMs that are running on the cluster hosts (i.e. both Hyper-V R2 Beta servers). Notice that the virtual machine needs to be powered off to be clusterized (otherwise the wizard will fail).

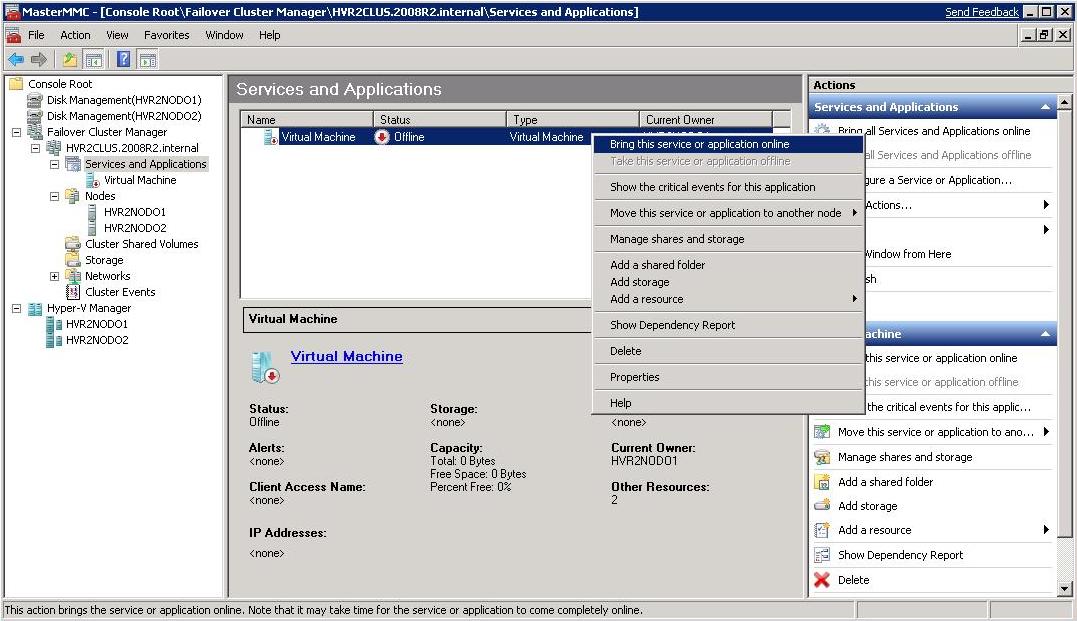

Once we have configured the resource we can bring the virtual machine on-line:

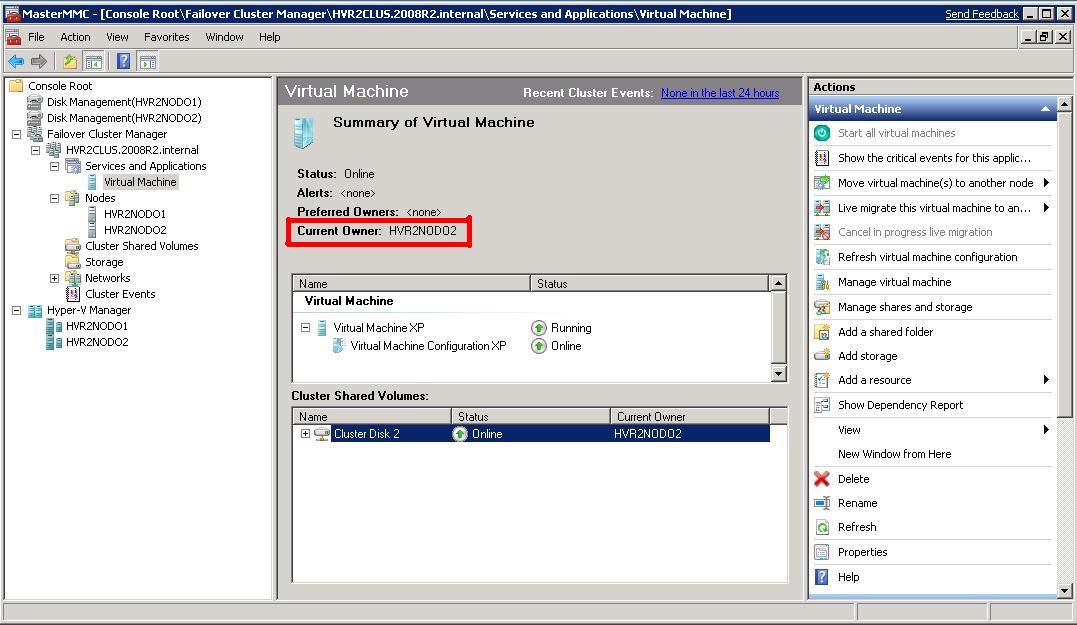

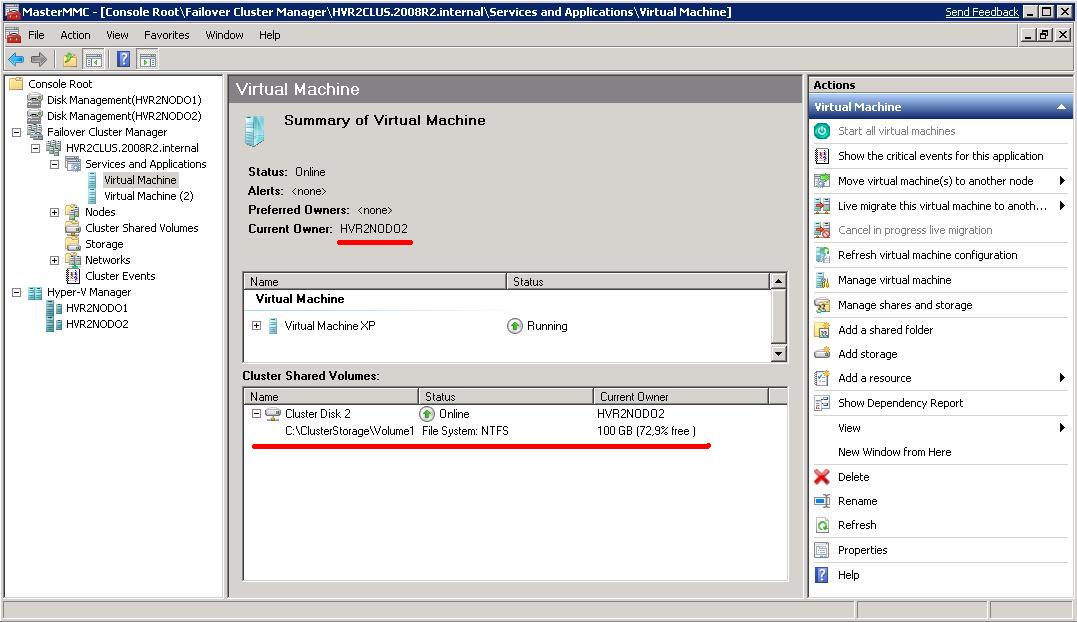

The resource (virtual machine) is now online and it's running on the second Hyper-V R2 node (HVR2NODO2) as you can see from the picture below:

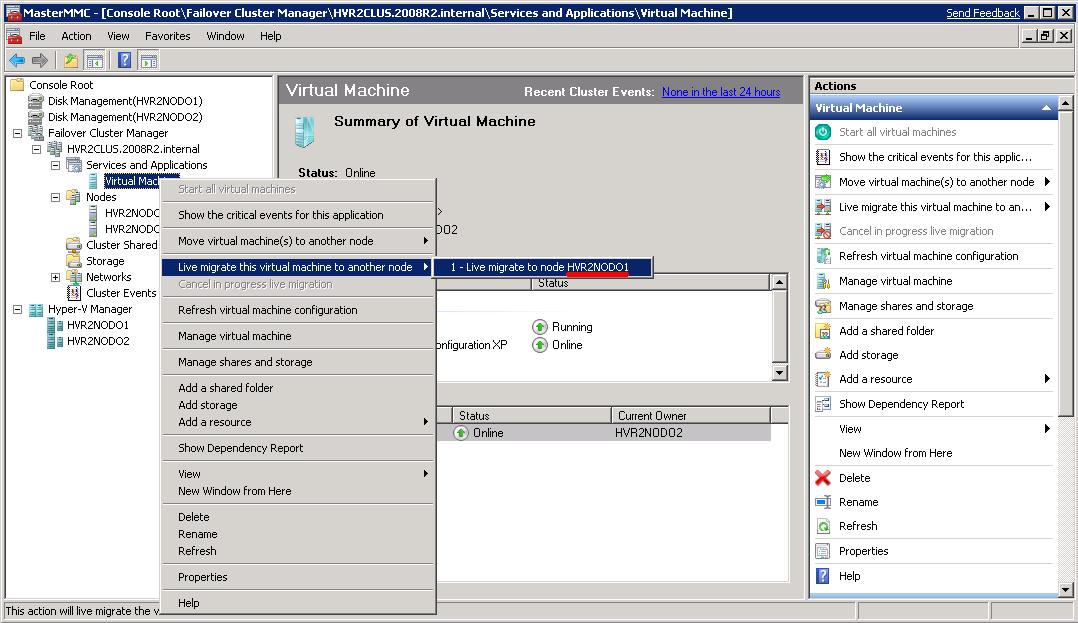

At this point you can invoke from the Failover Clustering interface a "Live Migration" of the resource as you can see below:

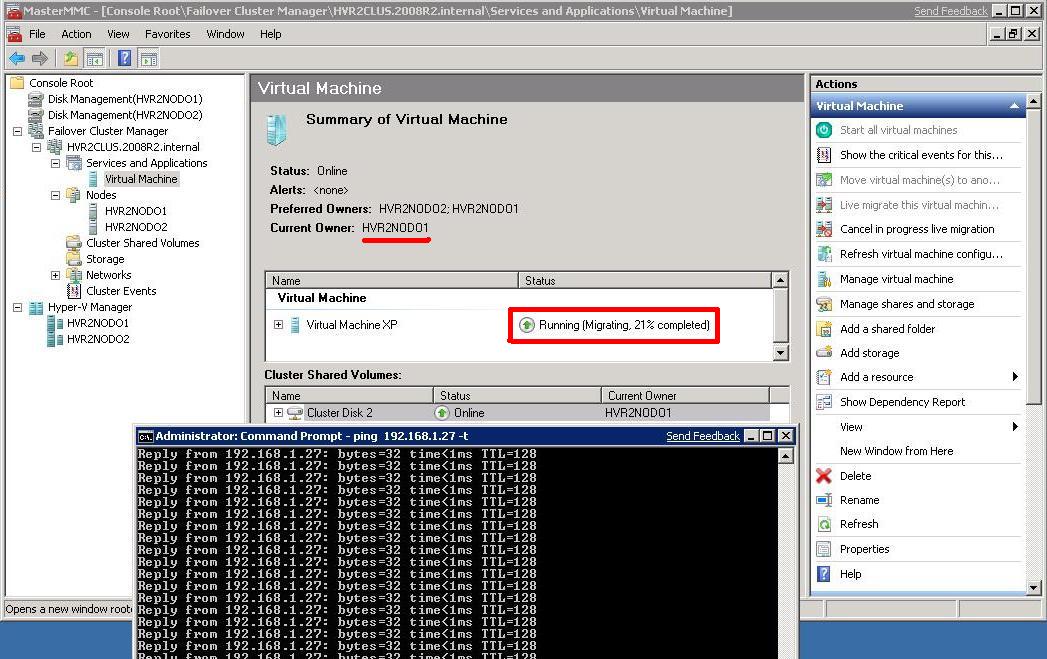

And the virtual machine will start the live migration onto the other host:

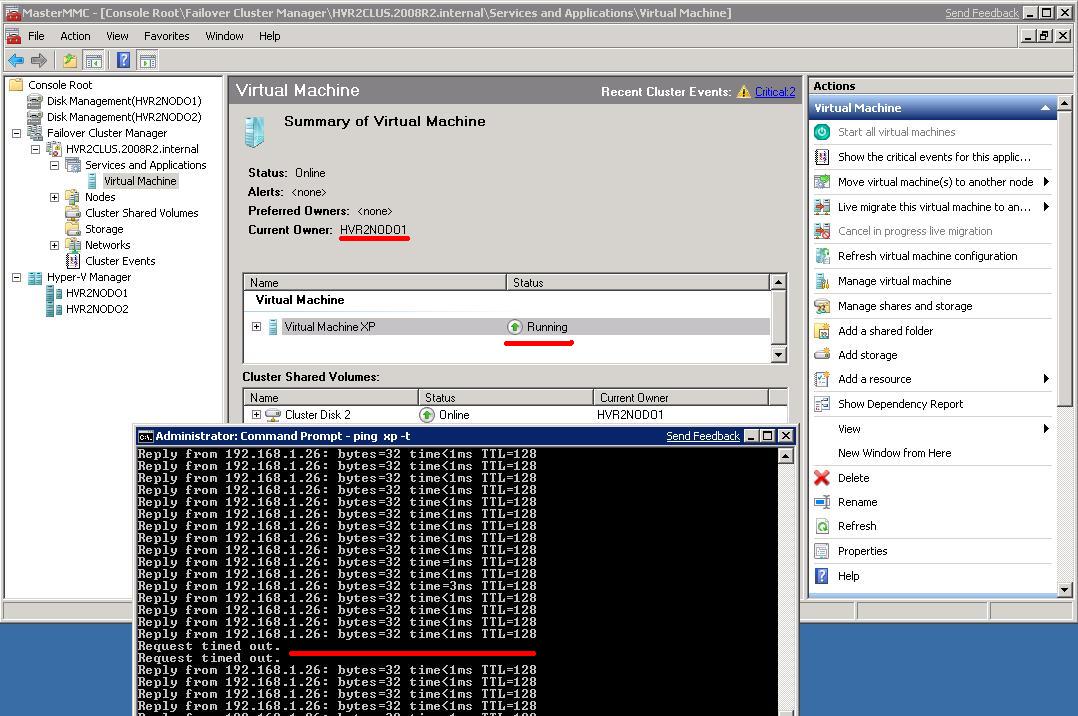

During my test I have been able to successfully move the virtual machine from one node to the other with basically no downtime except for a ping or two:

Consider that the networking configuration might not be optimal, and we will have to see what Microsoft will suggest in terms of network subsystem setup in the context of Live Migrating a virtual machine. Having said this, losing one or two pings is usually something most web and client/server applications would be able to handle, and it's not too different from the experience you would have using alternative live migration technologies from other vendors such as VMware, Citrix, VirtualIron etc.

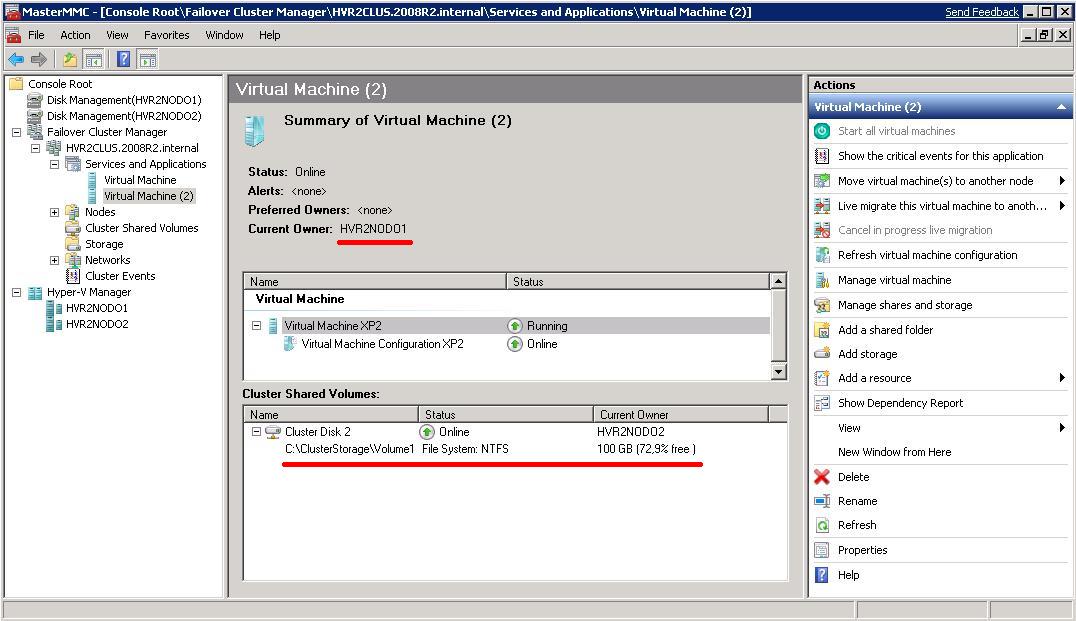

The last test of this proof of concept is to create another virtual machine and demonstrate that the two can run simultaneously on the two Hyper-V R2 Beta hosts while relying on a common shared LUN through the CSV technology. These are the screenshots of the two virtual machines running on different hosts but residing on the same repository, which is the CSV1 volume mapped on both hosts as "C:\ClusterStorage\Volume1":

There is one pretty interesting thing in these screenshots, in case you noticed. Despite the fact that both nodes can access the CSV at the very same time (otherwise they couldn't simultaneously run two virtual machines hosted on the same volume), the actual LUN is, at any point in time, "officially owned" by one of the two nodes (in this case the owner of the LUN is always HVR2NODO2). I must admit I have to dig more into CSVs, but they seem to be arbitrated and controlled by one node at a time. My assumption is that the cluster node that is NOT the owner of the LUN does not use the owner as a proxy to get there, because this would substantially hurt disk access performance (i.e. one node has direct access while the other node has pass-through access via the owner of the LUN, which is not a viable scenario). Somehow the other node (i.e. HVR2NODO1) has direct access to the LUN performance-wise, but it must also coordinate access rights with the official owner of the LUN itself (that is, HVR2NODO2).

In a scenario like this it would be interesting to understand what happens when the node that is the owner of the CSV crashes.

To recap, this is the summary of my current setup:

VirtualMachine ( ) is running on HVR2NODO2 and CSV owner is HVR2NODO2

VirtualMachine (2) is running on HVR2NODO1 and CSV owner is HVR2NODO2

In a cluster file system environment, if HVR2NODO2 fails, VirtualMachine(2) would continue to run on the other node (HVR2NODO1) without any interruption and VirtualMachine( ) would go off-line to restart on the same surviving node (HVR2NODO1).

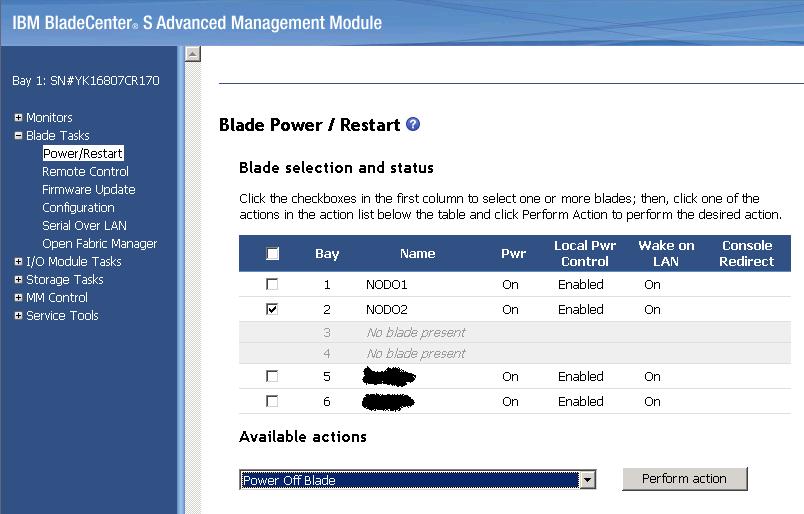

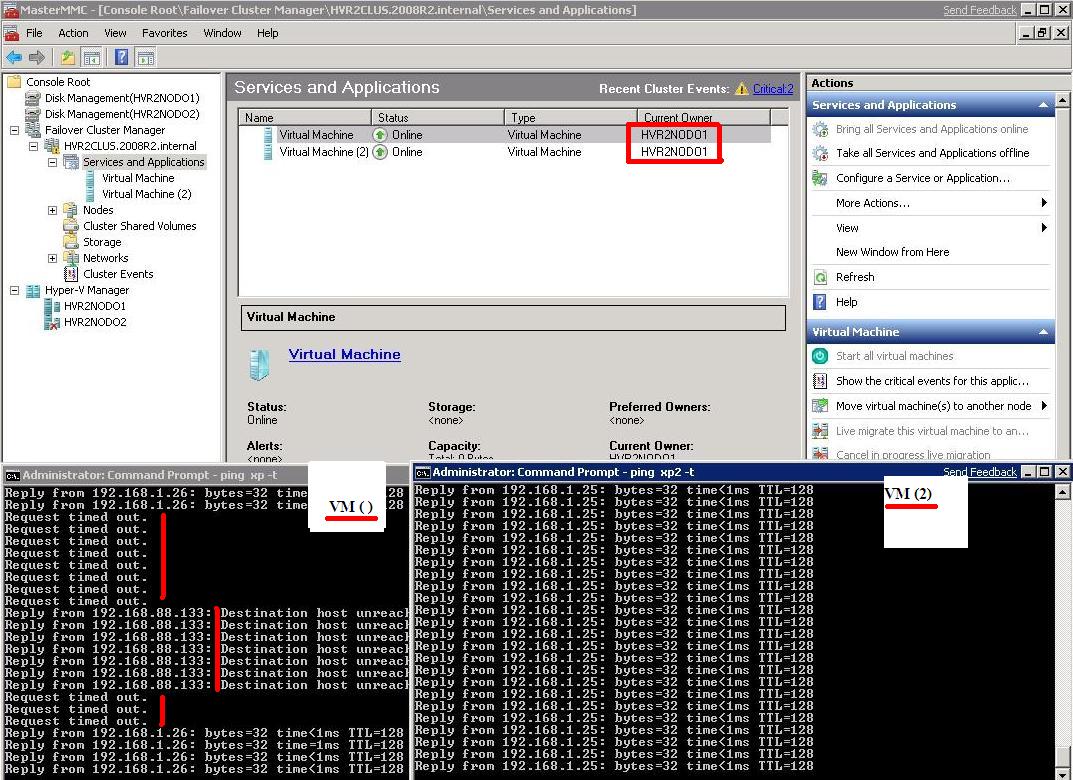

So I turned off blade #2 in the chassis (which is HVR2NODO2) via the remote BladeCenter S Management Module (MM):

VirtualMachine(2) didn't experience any issue, from either a ping perspective or a Failover Cluster Manager notification. This leads me to think that CSV ownership changes transparently, without any service interruption. This was somewhat expected, and the only point of concern was the ownership of the CSV (which apparently can be managed in a smart way). However, the other virtual machine experienced downtime. This was expected as well, since VirtualMachine( ) was running on HVR2NODO2, which was turned off "the hard way", so the failover algorithms had to kick in to bring it back on-line on the surviving node (HVR2NODO1) with a standard boot-up procedure.

Notice that the ping window first loses the link, then it starts to get a host destination unreachable message from the local IP address (192.168.88.133 is the host from which I am pinging). Eventually it starts to ping the guest again once it's brought back on-line.
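The behaviour observed in this test can be summarized as a small state model. The Python sketch below is a toy model of what I saw from the outside, not a description of how Failover Clustering works internally. The class, its fields and the VM labels ("vm_on_node2", "vm_on_node1") are hypothetical names I chose for illustration; only the node names come from the setup.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    """Toy model of a two-node cluster sharing one CSV."""
    nodes: set
    csv_owner: str
    vm_host: dict  # VM label -> node currently running it

    def fail_node(self, failed):
        """Simulate a hard power-off of `failed`.

        Returns the VMs that had to be restarted. VMs on surviving nodes
        are left untouched, and CSV ownership moves if the owner went down.
        """
        survivors = sorted(self.nodes - {failed})
        if not survivors:
            raise RuntimeError("no surviving node to fail over to")
        target = survivors[0]

        restarted = [vm for vm, host in self.vm_host.items() if host == failed]
        for vm in restarted:
            self.vm_host[vm] = target  # cold restart on the surviving node

        if self.csv_owner == failed:
            self.csv_owner = target  # ownership moves, running VMs keep going

        self.nodes.discard(failed)
        return restarted


cluster = Cluster(
    nodes={"HVR2NODO1", "HVR2NODO2"},
    csv_owner="HVR2NODO2",
    vm_host={"vm_on_node2": "HVR2NODO2", "vm_on_node1": "HVR2NODO1"},
)

restarted = cluster.fail_node("HVR2NODO2")
print("restarted:", restarted)
print("CSV owner:", cluster.csv_owner)
print("placement:", cluster.vm_host)
```

In the model, as in the test, only the VM that was running on the failed node goes through a restart, while the VM on the surviving node keeps its placement even though the CSV owner has changed underneath it.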

Preliminary Conclusions and Impressions

As I said at the beginning, I will write another piece on what I think the implications of these technologies will be in the market. From what I have seen so far, the Hyper-V R2 platform seems to be pretty stable (once I got past some weird issues with the Remote Disk Management stuff). Let's not forget that we will not see these technologies before the end of 2009 or the beginning of 2010. This is the common speculation in the industry, anyway. While this will allow plenty of time for Microsoft to fix these problems, the fact that these are still one year away will give VMware some time to think about their main competitor... although I am sure all this is already on their radar in Palo Alto.

There are a number of aspects in the Microsoft technologies that I think are a long way from catching up with what VMware is doing. VMware had the advantage of starting to develop a true virtualization platform from a blank sheet. Microsoft, on the other hand, has a legacy of technologies, so virtualization for Microsoft seems more hammered-in than anything else. An example is the fact that when you create a Virtual Machine from the Hyper-V Manager, the default location is "C:\ProgramData\Microsoft\Windows\Hyper-V", which is not what I would define as a proper default location for hosting enterprise workloads (in fact, it looks more like a Microsoft Office document default location). This might sound simple, but it tells you a lot about the heritage Microsoft wants and needs to protect.

That's pretty much it for the negative part. As far as the positive aspects are concerned, everything you have seen here (except the BladeCenter S and the Windows guests!) is all software that is free of charge. And this is not a trivial aspect or something to overlook.

Massimo.