Is AWS Slowing Down Due to Lack of Demand Rather Than Lack of Ideas?

I was surfing the web (as usual) a few days ago and an AWS presentation I spotted on SlideShare caught my attention.

Before I even begin, remember I (currently) work for VMware. I always try, on this blog, to be as open as possible and talk freely about what I really think.

However, feel free to turn on your bias filter if you don't trust me.

Back to the main topic: there isn't much new in that slide deck, and it basically summarizes the successful AWS story.

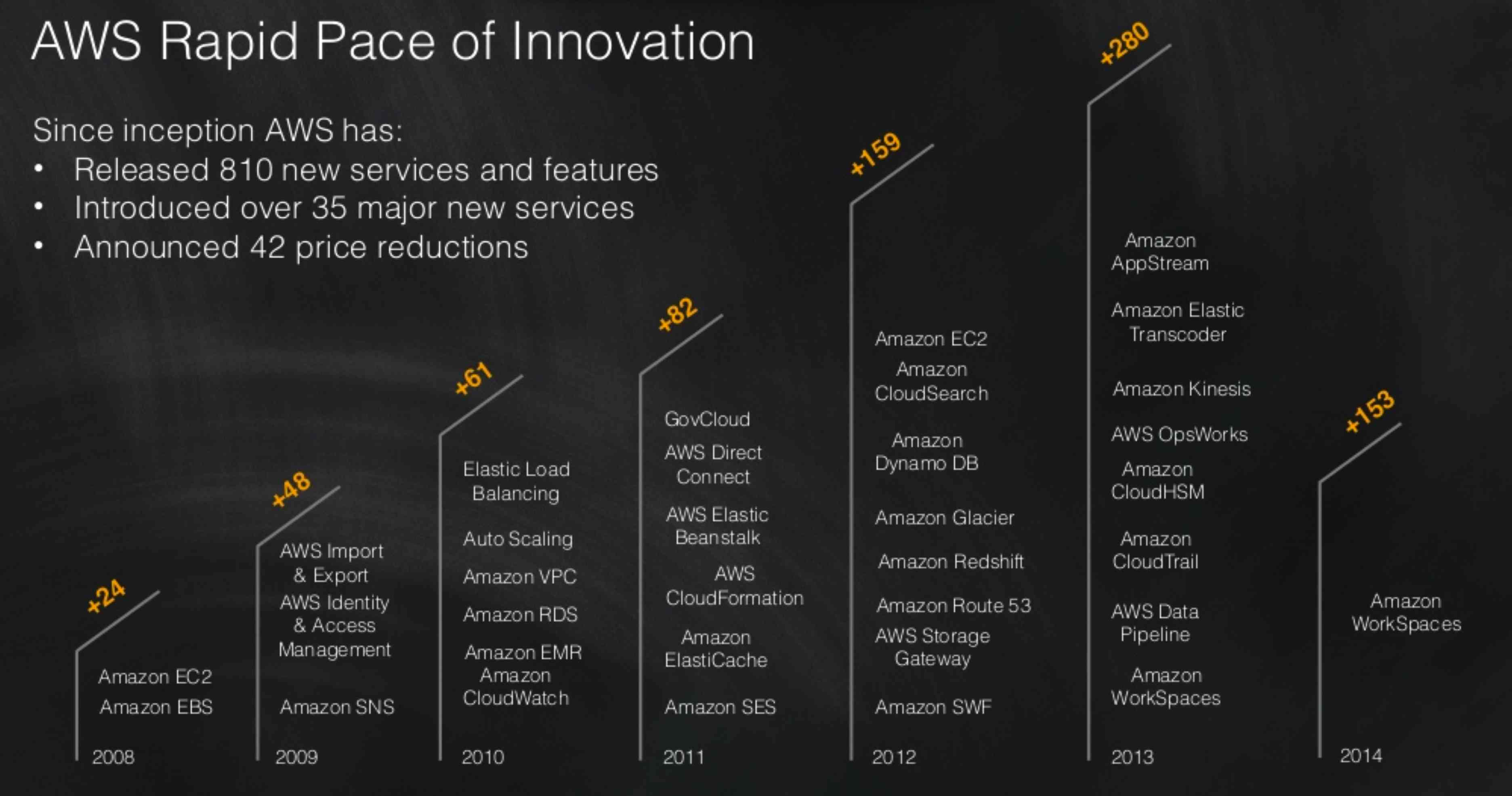

However, what intrigued me (big time) was slide #23:

It's June 2014, halfway through the year, and AWS has introduced only one new service (which, by the way, was announced in 2013, as you can see from the... 2013 column).

Surely AWS isn't going to lose its "king of public cloud" crown any time soon but, nevertheless, these dynamics are interesting (particularly in the context of things like... "Amazon's cloud reign may soon come to an end, says Gartner").

So what's going on here then? There are a few data points (or, I should say, personal points of view, as these are largely my own interpretations) that are worth mentioning before we jump to the ultimate conclusion (or rather, speculation).

- In 2012 I wrote a blog post titled "AWS: a Space Shuttle to Go Shopping?" where I alluded to the fact that the majority of AWS customers seem to be very basic in terms of use cases and deployment models (the Netflix anti-pattern, so to speak). In particular, what stood out from that research is that EC2, EBS, S3 and RDS account for the majority of what customers spend with AWS. That is to say that Amazon could have stopped developing its web services offering in 2010 (when they announced RDS; all the others had been announced earlier) and still be making pretty much the same amount of money. Well, OK, sort of, but try to picture "money logos" on the slide above and see where they stick.

- Last year I wrote a (controversial) blog post titled "Cloud and the Three IT Geographies (Silicon Valley, US and Rest of the World)" where I alluded to the fact that there is a huge lag in the industry between the leaders and the followers. For every Netflix, there are hundreds of organizations still taking baby steps to evolve their IT. Similarly to the point above, the conclusion I am getting to is that the more exotic things you add to your services portfolio, the bigger this lag becomes and the fewer customers (the visionaries) can take advantage of them. All this while the others (followers and majority) are still trying to figure out the basics of cloud.

- While there are a lot of people that are going all-in with public clouds and are using all available "add-on" services to achieve gigantic productivity gains, there is a growing movement that advocates using the "least common denominator" of features across diverse public cloud providers to avoid lock-in. For the record, I sympathize with the former category as I think lock-in is inevitable (as I wrote in 2012 in a blog post called "The ABC of Lock-In"). However, one cannot ignore that there are a lot of people thinking along the lines of "I don't want to be locked in". I have met with many customers, or public cloud prospects, that clearly told me they don't want (for example) a "message and queue service". They want an instance (as a service) with Linux, on top of which they want to load (and fully control) their "message and queue software" of choice. This would allow them to move from AWS to Azure to GCE to Rackspace to vCHS to whatever... with minimal disruption to their operations. I am not debating whether this is the right approach. I am saying this is an approach that seems to be gaining momentum (obviously pushed also by vendors that provide "cloud agnostic" tooling). Assuming 50% of the people are willing to go "all in" and the other 50% want to take a more cautious "least common denominator" approach to public cloud consumption, this essentially cuts in half the TAM for the services in the rightmost part of the slide above.

If the above makes some sort of sense, the conclusion (or rather, speculation) I am getting to is that AWS is slowing down due to lack of demand rather than lack of ideas.

Does it make sense to keep raising the bar when you know that 1) you make the bulk of your money with 4 basic services, 2) the majority of organizations are light-years behind when it comes to consuming simple public cloud services, let alone advanced and rich ones, and 3) the more advanced and rich services you make available, the more lock-in concerns you raise (and ultimately the fewer people you are going to appeal to)?

The rule in this business (or any business for that matter) is that if you invest $x in developing a new product or service, you should at least make an amount of associated revenue that offsets the investment (and, ideally, provides a profit as well).

What if this theory is the reason behind slide #23?

What if Amazon is instead spending its money on revisiting the existing core services to make them appeal to enterprises (in addition to startups and developers, which seem to be the current target)?

What if Amazon is working on making AWS a better place for pets rather than just for cattle? Perhaps they sniffed out where the money is? An obvious tweak they could introduce is to make "availability" a property of the infrastructure and not of the application. This clearly goes against every "true cloud pattern" they have been advocating so far, but it is still the only way to attract, in the short term, the $4 trillion per year (literally) that is going into "traditional IT" today.

Unfortunately, slide #24 of the aforementioned AWS presentation doesn't give us a clear picture of whether this is happening in the existing set of services. Much to my surprise, most of the "call outs" of new features introduced in 2014 relate to the availability of existing features in new AWS regions.

As someone who has been working, for the last 6 months, to expand the global footprint of the cloud service operated by my employer, I am not trying to diminish the value of a truly global service (on the contrary, I think this is one of the biggest strengths AWS has, among others), but still these do not seem to be a lot of "new features" strictly speaking:

Or perhaps Amazon is going to announce 9 new major services in the next 6 months to keep up the pace, and this whole blog post (with its associated speculations) will be history.

If this bizarre theory of mine is true, it will be interesting to see how things shape up going forward if the leader "needs" to stop introducing new differentiating services while the pack of followers keeps coming closer and closer.

All this while this cloud thing is still a nascent trend and not an established deployment model.

We can only wait and see. The only sure thing is that we live in interesting times.

Massimo.